Run LLMs on-device with LiteRT-LM

A production-ready, open-source inference framework for high-performance, cross-platform LLM deployment on edge devices.

Why LiteRT-LM?

Cross-platform

Deploy LLMs across Android, iOS, Web, and Desktop.

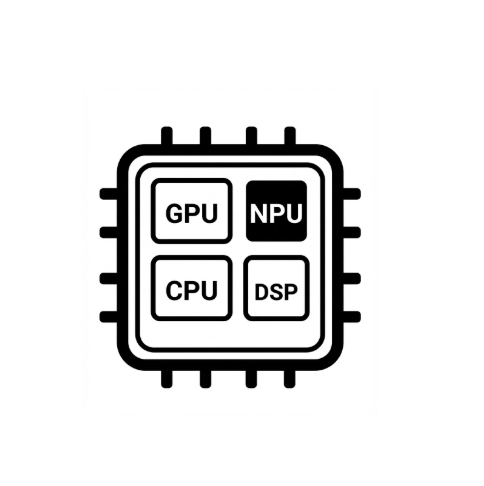

Hardware accelerated

Maximize performance with GPU and NPU acceleration.

Broad GenAI capabilities

Support for popular LLMs, multimodality (vision and audio), and tool use.

Join the Community

LiteRT-LM on GitHub

Contribute to the open-source project, report issues, and see examples.

Hugging Face

Download pre-converted models (Gemma, Qwen, and more) and join the discussion.

Blogs and Announcements

Bring state-of-the-art agentic skills to the edge with Gemma 4.

Use LiteRT-LM to deploy Gemma 4 in apps and across a broader range of devices, with high performance and wide reach.

On-device GenAI in Chrome, Chromebook Plus, and Pixel Watch

Use LiteRT-LM to deploy language models at scale on wearables and browser-based platforms.

On-device function calling in Google AI Edge Gallery

Learn how to fine-tune FunctionGemma and enable function calling powered by the LiteRT-LM Tool Use APIs.

Google AI Edge small language models, multimodality, and function calling

The latest insights on retrieval-augmented generation (RAG), multimodality, and function calling for edge language models.