Deploy AI across mobile, web, and embedded applications

- On-device: Reduce latency. Work offline. Keep your data local and private.
- Cross-platform: Run the same model across Android, iOS, web, and embedded devices.
- Multi-framework: Compatible with JAX, Keras, PyTorch, and TensorFlow models.
- Full AI edge stack: Flexible frameworks, turnkey solutions, and hardware accelerators.

Ready-made solutions and flexible frameworks

Low-code APIs for common AI tasks

Cross-platform APIs for common generative AI, vision, text, and audio tasks.

Get started with MediaPipe Tasks

Deploy custom models cross-platform

Run JAX, Keras, PyTorch, and TensorFlow models efficiently on Android, iOS, web, and embedded devices, optimized for both generative AI and traditional ML.

Get started with LiteRT

Shorten development cycles with visualization

Visualize how your model changes through conversion and quantization. Overlay benchmark results to debug hotspots.

Get started with Model Explorer

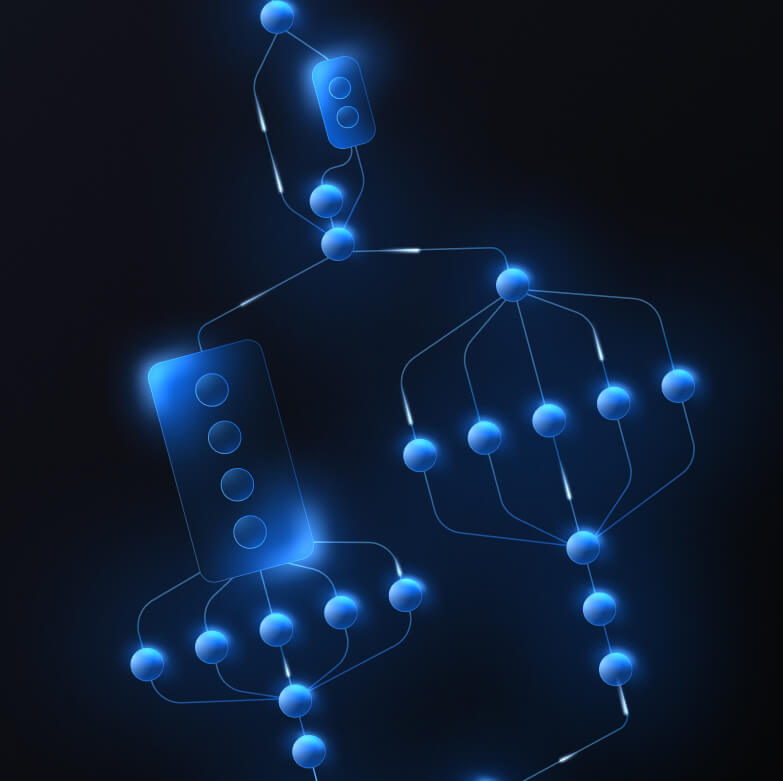
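To make concrete what quantization changes in a model, here is a minimal sketch of affine int8 quantization in plain Python. It is an illustration only, not the implementation used by LiteRT or Model Explorer; the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float, int]:
    """Map floats to int8 with a scale and zero point (illustrative only)."""
    # Extend the range to include 0.0 so that zero is exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0
    if scale == 0.0:
        scale = 1.0  # all values are zero; any scale works
    zero_point = round(-128 - lo / scale)
    # Round to the nearest step, shift by the zero point, clamp to int8.
    q = [max(-128, min(127, round(v / scale) + zero_point)) for v in values]
    return q, scale, zero_point


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate floats from the int8 representation."""
    return [(x - zero_point) * scale for x in q]


# Hypothetical weights, chosen only for the demonstration.
weights = [-1.0, 0.0, 0.5, 2.0]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)
errors = [abs(a - b) for a, b in zip(weights, restored)]
```

The per-value reconstruction error stays within about one quantization step (`scale`), which is the kind of numeric drift worth inspecting when comparing a model before and after quantization.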

Build custom pipelines for complex ML features

Build your own task by efficiently chaining multiple ML models together with pre- and post-processing logic. Run accelerated (GPU and NPU) pipelines without blocking the CPU.

Get started with MediaPipe Framework

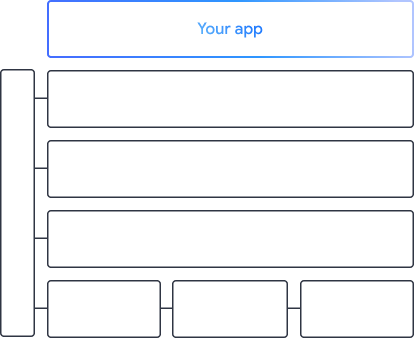

The tools and frameworks that power Google's apps

Explore the full AI edge stack, with products at every level, from low-code APIs down to hardware-specific acceleration libraries.

MediaPipe Tasks

Quickly build AI features into mobile and web apps using low-code APIs for common tasks spanning generative AI, computer vision, text, and audio.

Generative AI

Integrate generative language and image models directly into your apps with ready-to-use APIs.

Vision

Explore a wide range of vision tasks spanning segmentation, classification, detection, recognition, and body landmarks.

Text and audio

Classify text and audio across many categories, including language, sentiment, and your own custom categories.

Get started

MediaPipe Framework

A low-level framework for building high-performance accelerated ML pipelines, often including multiple ML models combined with pre- and post-processing.
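The idea of chaining models with pre- and post-processing can be sketched in a few lines of plain Python. This is only a conceptual illustration under stated assumptions: it does not use the MediaPipe Framework API and performs no GPU or NPU acceleration, and every name in it (`build_pipeline`, `normalize`, `fake_detector`, `fake_classifier`, `format_result`) is hypothetical, with stub functions standing in for real models.

```python
from typing import Any, Callable, Dict, List

Stage = Callable[[Any], Any]


def build_pipeline(stages: List[Stage]) -> Stage:
    """Compose stages so each one's output feeds the next."""
    def run(data: Any) -> Any:
        for stage in stages:
            data = stage(data)
        return data
    return run


def normalize(pixels: List[int]) -> List[float]:
    # Pre-processing: scale 8-bit pixel values into [0, 1].
    return [p / 255.0 for p in pixels]


def fake_detector(pixels: List[float]) -> Dict[str, float]:
    # Stand-in for a first model: reports mean brightness as a score.
    return {"score": sum(pixels) / len(pixels)}


def fake_classifier(detection: Dict[str, float]) -> Dict[str, Any]:
    # Stand-in for a second model that consumes the first model's output.
    label = "bright" if detection["score"] > 0.5 else "dark"
    return {"label": label, "score": detection["score"]}


def format_result(result: Dict[str, Any]) -> str:
    # Post-processing: turn the raw result into display text.
    return f'{result["label"]} ({result["score"]:.2f})'


pipeline = build_pipeline(
    [normalize, fake_detector, fake_classifier, format_result]
)
print(pipeline([255, 255, 0, 255]))  # prints "bright (0.75)"
```

Keeping each stage a plain function with one input and one output makes it easy to swap a stub for a real model later without touching the rest of the chain.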

LiteRT

Deploy AI models authored in any framework across mobile, web, and microcontrollers with optimized hardware-specific acceleration.

Multi-framework

Convert models from JAX, Keras, PyTorch, and TensorFlow to run on the edge.

Cross-platform

Run the exact same model on Android, iOS, web, and microcontrollers with native SDKs.

Lightweight and fast

LiteRT's efficient runtime takes up only a few megabytes and enables model acceleration across CPU, GPU, and NPU.

Get started

Model Explorer

Visually explore, debug, and compare your models. Overlay benchmarks and numeric performance data to pinpoint problematic hotspots.

Gemini Nano on Android and Chrome

Build generative AI experiences with Google's most powerful on-device model