Image generation with Nano Banana

- Try a Nano Banana 2 app

- Or build your own from a prompt:

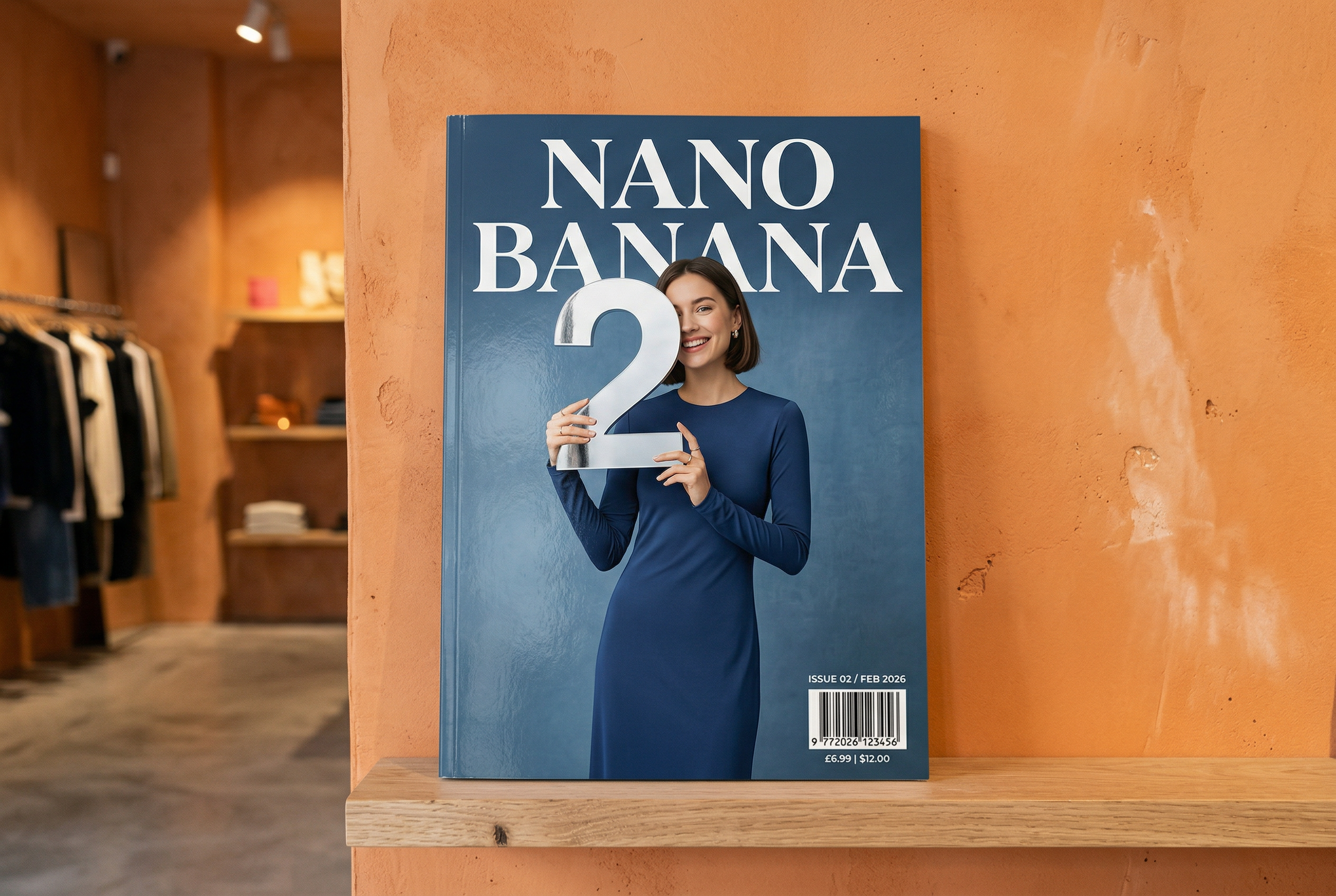

- Generated by Nano Banana 2. Prompt: "A photo of a glossy magazine cover. The minimal blue cover has the words Nano Banana in large bold letters. The text is in a serif font and fills the view. There is no other text. In front of the text there is a portrait of a person in a sleek, minimal dress. She is playfully holding the number 2, which is the focal point. Put the issue number and the date "Feb 2026" in the corner along with a barcode. The magazine is on a shelf against an orange plastered wall, inside a designer store."

- Generated by Nano Banana Pro. Prompt: "Present a clear, 45° top-down isometric miniature 3D cartoon scene of London, featuring its most iconic landmarks and architectural elements. Use soft, refined textures with realistic PBR materials and gentle, lifelike lighting and shadows. Integrate the current weather conditions directly into the city environment to create an immersive atmospheric mood. Use a clean, minimalistic composition with a soft, solid-colored background. At the top center, place the title "London" in large bold text, a prominent weather icon beneath it, then the date (small text) and temperature (medium text). All text must be centered with consistent spacing, and may subtly overlap the tops of the buildings." Learn more about grounding with Search and try it in AI Studio.

- Generated by Nano Banana 2. Prompt: "Use image search to find accurate images of a resplendent quetzal. Create a beautiful 3:2 wallpaper of this bird, with a natural top-to-bottom gradient and a minimal composition." Use Google Image Search grounding with Nano Banana 2. Try it in AI Studio.

- Generated by Nano Banana Pro. Prompt: "Put this logo on a high-end ad for a banana-scented perfume. The logo is perfectly integrated into the bottle." Try Nano Banana's high-fidelity detail preservation in AI Studio.

- Generated by Nano Banana Pro. Prompt: "A photo of an everyday scene at a busy cafe serving breakfast. In the foreground is an anime man with blue hair. One of the people is a pencil sketch, and another is a claymation person." Experiment with different art styles with Nano Banana in AI Studio.

- Generated by Nano Banana Pro. Prompt: "Use search to find out how the Gemini 3 Flash launch was received. Use this information to write a short article about it (with headings). Return a photo of the article as it appeared in a design-focused glossy magazine. It is a photo of a single folded-over page, showing the article about Gemini 3 Flash. One hero photo. The headline is in serif."

- Generated by Nano Banana Pro. Prompt: "An icon representing a cute dog. The background is white. Make the icons in a colorful and tactile 3D style. No text." Create icons, stickers, and assets with Nano Banana in AI Studio.

- Generated by Nano Banana 2. Prompt: "Make a photo that is perfectly isometric. It is not a miniature; it is a captured photo that just happened to be perfectly isometric. It is a photo of a beautiful modern garden. There is a large pool shaped like a 2 and the words: Nano Banana 2." Try photorealistic image generation in AI Studio.

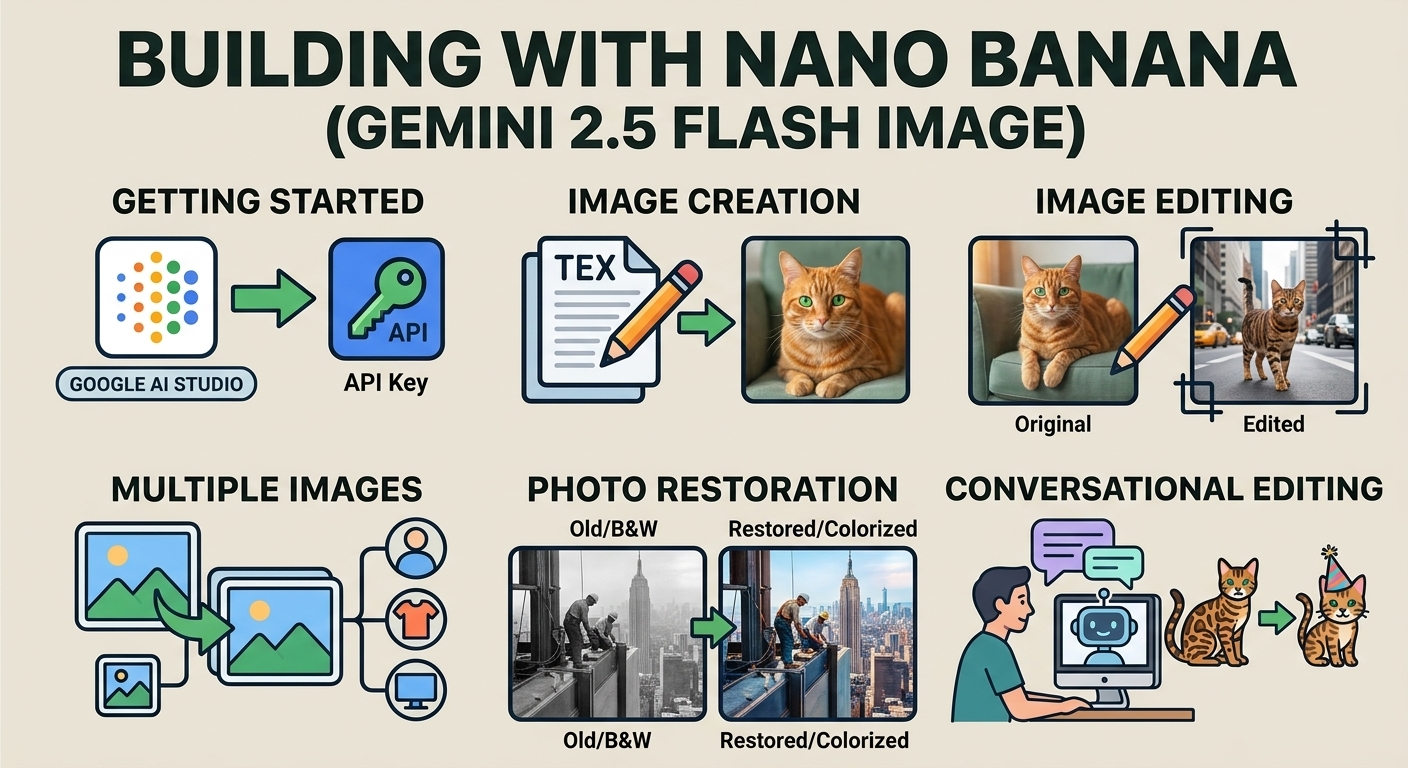

Nano Banana is the name for Gemini's native image generation capabilities. Gemini can generate and process images conversationally from text, images, or a combination of both. This lets you create, edit, and iterate on images with fine-grained control.

Nano Banana refers to three distinct models available in the Gemini API:

- Nano Banana 2: The Gemini 3.1 Flash Image model (gemini-3.1-flash-image). This model serves as the high-efficiency counterpart to Gemini 3 Pro Image, optimized for speed and high-volume developer use cases.

- Nano Banana Pro: The Gemini 3 Pro Image model (gemini-3-pro-image). This model is designed for professional asset production, using advanced reasoning ("Thinking") to follow complex instructions and render high-fidelity text.

- Nano Banana: The Gemini 2.5 Flash Image model (gemini-2.5-flash-image). This model is designed for speed and efficiency, optimized for high-volume, low-latency tasks.

All generated images include a SynthID watermark.
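To keep the three names straight in client code, a small lookup can help. The following is a minimal sketch, not part of any SDK: the model IDs are the ones listed above, while `NANO_BANANA_MODELS` and `resolve_model` are hypothetical names introduced only for illustration.

```python
# Marketing name -> API model ID, copied from the list above.
NANO_BANANA_MODELS = {
    "Nano Banana 2": "gemini-3.1-flash-image",
    "Nano Banana Pro": "gemini-3-pro-image",
    "Nano Banana": "gemini-2.5-flash-image",
}


def resolve_model(name: str) -> str:
    """Return the model ID for a Nano Banana name (hypothetical helper)."""
    try:
        return NANO_BANANA_MODELS[name]
    except KeyError:
        known = ", ".join(sorted(NANO_BANANA_MODELS))
        raise ValueError(f"Unknown model name {name!r}; expected one of: {known}") from None


if __name__ == "__main__":
    print(resolve_model("Nano Banana Pro"))
```

The returned string could then be passed as the `model` argument in the samples that follow.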

Image generation (text-to-image)

Python

from google import genai

from google.genai import types

from PIL import Image

client = genai.Client()

prompt = ("Create a picture of a nano banana dish in a fancy restaurant with a Gemini theme")

response = client.models.generate_content(

model="gemini-3.1-flash-image",

contents=[prompt],

)

for part in response.parts:

if part.text is not None:

print(part.text)

elif part.inline_data is not None:

image = part.as_image()

image.save("generated_image.png")

JavaScript

import { GoogleGenAI } from "@google/genai";

import * as fs from "node:fs";

async function main() {

const ai = new GoogleGenAI({});

const prompt =

"Create a picture of a nano banana dish in a fancy restaurant with a Gemini theme";

const response = await ai.models.generateContent({

model: "gemini-3.1-flash-image",

contents: prompt,

});

for (const part of response.candidates[0].content.parts) {

if (part.text) {

console.log(part.text);

} else if (part.inlineData) {

const imageData = part.inlineData.data;

const buffer = Buffer.from(imageData, "base64");

fs.writeFileSync("gemini-native-image.png", buffer);

console.log("Image saved as gemini-native-image.png");

}

}

}

main();

Go

package main

import (

"context"

"fmt"

"log"

"os"

"google.golang.org/genai"

)

func main() {

ctx := context.Background()

client, err := genai.NewClient(ctx, nil)

if err != nil {

log.Fatal(err)

}

result, _ := client.Models.GenerateContent(

ctx,

"gemini-3.1-flash-image",

genai.Text("Create a picture of a nano banana dish in a " +

"fancy restaurant with a Gemini theme"),

)

for _, part := range result.Candidates[0].Content.Parts {

if part.Text != "" {

fmt.Println(part.Text)

} else if part.InlineData != nil {

imageBytes := part.InlineData.Data

outputFilename := "gemini_generated_image.png"

_ = os.WriteFile(outputFilename, imageBytes, 0644)

}

}

}

Java

import com.google.genai.Client;

import com.google.genai.types.GenerateContentConfig;

import com.google.genai.types.GenerateContentResponse;

import com.google.genai.types.Part;

import java.io.IOException;

import java.nio.file.Files;

import java.nio.file.Paths;

public class TextToImage {

public static void main(String[] args) throws IOException {

try (Client client = new Client()) {

GenerateContentConfig config = GenerateContentConfig.builder()

.responseModalities("TEXT", "IMAGE")

.build();

GenerateContentResponse response = client.models.generateContent(

"gemini-3.1-flash-image",

"Create a picture of a nano banana dish in a fancy restaurant with a Gemini theme",

config);

for (Part part : response.parts()) {

if (part.text().isPresent()) {

System.out.println(part.text().get());

} else if (part.inlineData().isPresent()) {

var blob = part.inlineData().get();

if (blob.data().isPresent()) {

Files.write(Paths.get("_01_generated_image.png"), blob.data().get());

}

}

}

}

}

}

C#

using Google.GenAI;

using System;

using System.Collections.Generic;

using System.IO;

using System.Threading.Tasks;

public class TextToImage {

public static async Task Main(string[] args) {

var client = new Client();

var response = await client.Models.GenerateContentAsync(

model: "gemini-3.1-flash-image",

contents: new List<Part>

{

new Part { Text = "Create a picture of a nano banana dish in a fancy restaurant with a Gemini theme" }

}

);

foreach (var candidate in response.Candidates) {

foreach (var part in candidate.Content.Parts) {

if (part.Text != null) {

Console.WriteLine(part.Text);

} else if (part.InlineData != null) {

var imageBytes = Convert.FromBase64String(part.InlineData.Data);

await File.WriteAllBytesAsync("generated_image.png", imageBytes);

Console.WriteLine("Image saved as generated_image.png");

}

}

}

}

}

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1/models/gemini-3.1-flash-image:generateContent" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"contents": [{

"parts": [

{"text": "Create a picture of a nano banana dish in a fancy restaurant with a Gemini theme"}

]

}]

}'
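When you call the REST endpoint directly, the generated image arrives inside the JSON body rather than as a file. The sketch below is not one of the official samples: it walks `candidates[0].content.parts` the same way the SDK examples above do and writes any image parts to disk. It assumes the REST response uses camelCase `inlineData` with a base64 `data` string and a `mimeType` field, mirroring what the JavaScript sample reads; `save_response_parts`, `EXTENSIONS`, and the output file names are hypothetical.

```python
import base64
from pathlib import Path

# Assumed mapping from MIME type to file extension; extend as needed.
EXTENSIONS = {"image/png": ".png", "image/jpeg": ".jpg", "image/webp": ".webp"}


def save_response_parts(response: dict, out_dir: str = ".") -> tuple[list[str], list[Path]]:
    """Collect text parts and write image parts of a parsed JSON response to out_dir."""
    texts: list[str] = []
    files: list[Path] = []
    candidates = response.get("candidates") or []
    if not candidates:
        return texts, files  # nothing to extract, e.g. the request failed
    parts = candidates[0].get("content", {}).get("parts", [])
    for index, part in enumerate(parts):
        if "text" in part:
            texts.append(part["text"])
        elif "inlineData" in part:
            blob = part["inlineData"]
            suffix = EXTENSIONS.get(blob.get("mimeType", ""), ".bin")
            path = Path(out_dir) / f"generated_{index}{suffix}"
            # The image bytes travel as base64 text inside the JSON body.
            path.write_bytes(base64.b64decode(blob["data"]))
            files.append(path)
    return texts, files
```

A typical call would be `save_response_parts(json.loads(body))`, where `body` is the output captured from the curl command.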

Image editing (text-and-image-to-image)

Reminder: Make sure you have the necessary rights to any images you upload. Do not generate content that infringes on others' rights, including videos or images that deceive, harass, or harm. Your use of this generative AI service is subject to our Prohibited Use Policy.

Provide an image and use text prompts to add, remove, or modify elements, change the style, or adjust the color grading.

The following example demonstrates uploading base64-encoded images.

For multiple images, larger payloads, and supported MIME types, see the Image understanding page.

Python

from google import genai

from google.genai import types

from PIL import Image

client = genai.Client()

prompt = (

    "Create a picture of my cat eating a nano-banana in a "

    "fancy restaurant under the Gemini constellation"

)

image = Image.open("/path/to/cat_image.png")

response = client.models.generate_content(

model="gemini-3.1-flash-image",

contents=[prompt, image],

)

for part in response.parts:

if part.text is not None:

print(part.text)

elif part.inline_data is not None:

image = part.as_image()

image.save("generated_image.png")

JavaScript

import { GoogleGenAI } from "@google/genai";

import * as fs from "node:fs";

async function main() {

const ai = new GoogleGenAI({});

const imagePath = "path/to/cat_image.png";

const imageData = fs.readFileSync(imagePath);

const base64Image = imageData.toString("base64");

const prompt = [

{ text: "Create a picture of my cat eating a nano-banana in a " +

"fancy restaurant under the Gemini constellation" },

{

inlineData: {

mimeType: "image/png",

data: base64Image,

},

},

];

const response = await ai.models.generateContent({

model: "gemini-3.1-flash-image",

contents: prompt,

});

for (const part of response.candidates[0].content.parts) {

if (part.text) {

console.log(part.text);

} else if (part.inlineData) {

const imageData = part.inlineData.data;

const buffer = Buffer.from(imageData, "base64");

fs.writeFileSync("gemini-native-image.png", buffer);

console.log("Image saved as gemini-native-image.png");

}

}

}

main();

Go

package main

import (

"context"

"fmt"

"log"

"os"

"google.golang.org/genai"

)

func main() {

ctx := context.Background()

client, err := genai.NewClient(ctx, nil)

if err != nil {

log.Fatal(err)

}

imagePath := "/path/to/cat_image.png"

imgData, _ := os.ReadFile(imagePath)

parts := []*genai.Part{

genai.NewPartFromText("Create a picture of my cat eating a nano-banana in a fancy restaurant under the Gemini constellation"),

&genai.Part{

InlineData: &genai.Blob{

MIMEType: "image/png",

Data: imgData,

},

},

}

contents := []*genai.Content{

genai.NewContentFromParts(parts, genai.RoleUser),

}

result, _ := client.Models.GenerateContent(

ctx,

"gemini-3.1-flash-image",

contents,

)

for _, part := range result.Candidates[0].Content.Parts {

if part.Text != "" {

fmt.Println(part.Text)

} else if part.InlineData != nil {

imageBytes := part.InlineData.Data

outputFilename := "gemini_generated_image.png"

_ = os.WriteFile(outputFilename, imageBytes, 0644)

}

}

}

Java

import com.google.genai.Client;

import com.google.genai.types.Content;

import com.google.genai.types.GenerateContentConfig;

import com.google.genai.types.GenerateContentResponse;

import com.google.genai.types.Part;

import java.io.IOException;

import java.nio.file.Files;

import java.nio.file.Path;

import java.nio.file.Paths;

public class TextAndImageToImage {

public static void main(String[] args) throws IOException {

try (Client client = new Client()) {

GenerateContentConfig config = GenerateContentConfig.builder()

.responseModalities("TEXT", "IMAGE")

.build();

GenerateContentResponse response = client.models.generateContent(

"gemini-3.1-flash-image",

Content.fromParts(

Part.fromText("""

Create a picture of my cat eating a nano-banana in

a fancy restaurant under the Gemini constellation

"""),

Part.fromBytes(

Files.readAllBytes(

Path.of("src/main/resources/cat.jpg")),

"image/jpeg")),

config);

for (Part part : response.parts()) {

if (part.text().isPresent()) {

System.out.println(part.text().get());

} else if (part.inlineData().isPresent()) {

var blob = part.inlineData().get();

if (blob.data().isPresent()) {

Files.write(Paths.get("gemini_generated_image.png"), blob.data().get());

}

}

}

}

}

}

C#

using Google.GenAI;

using System;

using System.Collections.Generic;

using System.IO;

using System.Threading.Tasks;

public class TextAndImageToImage {

public static async Task Main(string[] args) {

var client = new Client();

var response = await client.Models.GenerateContentAsync(

model: "gemini-3.1-flash-image",

contents: new List<Part>

{

new Part { Text = "Create a picture of my cat eating a nano-banana in a fancy restaurant under the Gemini constellation" },

new Part

{

FileData = new FileData { FileUri = "file:///path/to/cat_image.png" }

}

}

);

foreach (var candidate in response.Candidates) {

foreach (var part in candidate.Content.Parts) {

if (part.Text != null) {

Console.WriteLine(part.Text);

} else if (part.InlineData != null) {

var imageBytes = Convert.FromBase64String(part.InlineData.Data);

await File.WriteAllBytesAsync("gemini_generated_image.png", imageBytes);

Console.WriteLine("Image saved as gemini_generated_image.png");

}

}

}

}

}

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1/models/gemini-3.1-flash-image:generateContent" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H 'Content-Type: application/json' \

-d "{

\"contents\": [{

\"parts\":[

{\"text\": \"Create a picture of my cat eating a nano-banana in a fancy restaurant under the Gemini constellation\"},

{

\"inline_data\": {

\"mime_type\":\"image/jpeg\",

\"data\": \"<BASE64_IMAGE_DATA>\"

}

}

]

}]

}"
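The REST request above expects the image as base64 text in `inline_data.data`. As a rough sketch of how that body could be assembled with only the Python standard library, consider the following. The field names are taken from the curl example; `build_edit_request` is a hypothetical helper, and the placeholder bytes stand in for a real image file.

```python
import base64
import json


def build_edit_request(prompt: str, image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Build a generateContent body with one text part and one inline image part."""
    if not image_bytes:
        raise ValueError("image_bytes is empty")
    # JSON cannot carry raw bytes, so the image is sent as base64 text.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "contents": [{
            "parts": [
                {"text": prompt},
                {"inline_data": {"mime_type": mime_type, "data": encoded}},
            ]
        }]
    }


if __name__ == "__main__":
    # Placeholder bytes; in practice read them with open(path, "rb").read().
    body = build_edit_request("Make the sky purple", b"\x89PNG placeholder")
    print(json.dumps(body, indent=2))
```

The `mime_type` should match the actual file (for example `image/jpeg` for a JPEG), since the request declares the type separately from the data.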

Edición de imágenes de varios turnos

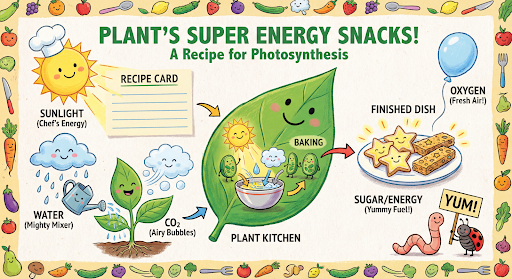

Sigue generando y editando imágenes de forma conversacional. Se recomienda usar el chat o la conversación de varios turnos para iterar imágenes. En el siguiente ejemplo, se muestra una instrucción para generar una infografía sobre la fotosíntesis.

Python

from google import genai

from google.genai import types

client = genai.Client()

chat = client.chats.create(

model="gemini-3.1-flash-image",

config=types.GenerateContentConfig(

response_modalities=['TEXT', 'IMAGE'],

tools=[{"google_search": {}}]

)

)

message = "Create a vibrant infographic that explains photosynthesis as if it were a recipe for a plant's favorite food. Show the \"ingredients\" (sunlight, water, CO2) and the \"finished dish\" (sugar/energy). The style should be like a page from a colorful kids' cookbook, suitable for a 4th grader."

response = chat.send_message(message)

for part in response.parts:

if part.text is not None:

print(part.text)

elif image:= part.as_image():

image.save("photosynthesis.png")

JavaScript

import { GoogleGenAI } from "@google/genai";

import * as fs from "node:fs";

const ai = new GoogleGenAI({});

// Create the chat at module scope so the turns below can reuse it.

const chat = ai.chats.create({

  model: "gemini-3.1-flash-image",

  config: {

    responseModalities: ['TEXT', 'IMAGE'],

    tools: [{googleSearch: {}}],

  },

});

const message = "Create a vibrant infographic that explains photosynthesis as if it were a recipe for a plant's favorite food. Show the \"ingredients\" (sunlight, water, CO2) and the \"finished dish\" (sugar/energy). The style should be like a page from a colorful kids' cookbook, suitable for a 4th grader."

let response = await chat.sendMessage({message});

for (const part of response.candidates[0].content.parts) {

if (part.text) {

console.log(part.text);

} else if (part.inlineData) {

const imageData = part.inlineData.data;

const buffer = Buffer.from(imageData, "base64");

fs.writeFileSync("photosynthesis.png", buffer);

console.log("Image saved as photosynthesis.png");

}

}

Go

package main

import (

"context"

"fmt"

"log"

"os"

"google.golang.org/genai"

)

func main() {

ctx := context.Background()

client, err := genai.NewClient(ctx, nil)

if err != nil {

log.Fatal(err)

}

defer client.Close()

model := client.GenerativeModel("gemini-3.1-flash-image")

model.GenerationConfig = &pb.GenerationConfig{

ResponseModalities: []pb.ResponseModality{genai.Text, genai.Image},

}

chat := model.StartChat()

message := "Create a vibrant infographic that explains photosynthesis as if it were a recipe for a plant's favorite food. Show the \"ingredients\" (sunlight, water, CO2) and the \"finished dish\" (sugar/energy). The style should be like a page from a colorful kids' cookbook, suitable for a 4th grader."

resp, err := chat.SendMessage(ctx, genai.Text(message))

if err != nil {

log.Fatal(err)

}

for _, part := range resp.Candidates[0].Content.Parts {

if txt, ok := part.(genai.Text); ok {

fmt.Printf("%s", string(txt))

} else if img, ok := part.(genai.ImageData); ok {

err := os.WriteFile("photosynthesis.png", img.Data, 0644)

if err != nil {

log.Fatal(err)

}

}

}

}

Java

import com.google.genai.Chat;

import com.google.genai.Client;

import com.google.genai.types.Content;

import com.google.genai.types.GenerateContentConfig;

import com.google.genai.types.GenerateContentResponse;

import com.google.genai.types.GoogleSearch;

import com.google.genai.types.ImageConfig;

import com.google.genai.types.Part;

import com.google.genai.types.RetrievalConfig;

import com.google.genai.types.Tool;

import com.google.genai.types.ToolConfig;

import java.io.IOException;

import java.nio.file.Files;

import java.nio.file.Path;

import java.nio.file.Paths;

public class MultiturnImageEditing {

public static void main(String[] args) throws IOException {

try (Client client = new Client()) {

GenerateContentConfig config = GenerateContentConfig.builder()

.responseModalities("TEXT", "IMAGE")

.tools(Tool.builder()

.googleSearch(GoogleSearch.builder().build())

.build())

.build();

Chat chat = client.chats.create("gemini-3.1-flash-image", config);

GenerateContentResponse response = chat.sendMessage("""

Create a vibrant infographic that explains photosynthesis

as if it were a recipe for a plant's favorite food.

Show the "ingredients" (sunlight, water, CO2)

and the "finished dish" (sugar/energy).

The style should be like a page from a colorful

kids' cookbook, suitable for a 4th grader.

""");

for (Part part : response.parts()) {

if (part.text().isPresent()) {

System.out.println(part.text().get());

} else if (part.inlineData().isPresent()) {

var blob = part.inlineData().get();

if (blob.data().isPresent()) {

Files.write(Paths.get("photosynthesis.png"), blob.data().get());

}

}

}

// ...

}

}

}

C#

using Google.GenAI;

using System;

using System.Collections.Generic;

using System.IO;

using System.Threading.Tasks;

public class MultiturnImageEditing {

public static async Task Main(string[] args) {

var client = new Client();

var response = await client.Models.GenerateContentAsync(

model: "gemini-3.1-flash-image",

contents: new List<Part>

{

new Part { Text = "Create a vibrant infographic that explains photosynthesis as if it were a recipe for a plant's favorite food. Show the \"ingredients\" (sunlight, water, CO2) and the \"finished dish\" (sugar/energy). The style should be like a page from a colorful kids' cookbook, suitable for a 4th grader." }

},

config: new GenerateContentConfig

{

ResponseModalities = new List<string> { "TEXT", "IMAGE" },

Tools = new List<Tool> { new Tool { GoogleSearch = new GoogleSearch() } }

}

);

foreach (var candidate in response.Candidates) {

foreach (var part in candidate.Content.Parts) {

if (part.Text != null) {

Console.WriteLine(part.Text);

} else if (part.InlineData != null) {

var imageBytes = Convert.FromBase64String(part.InlineData.Data);

await File.WriteAllBytesAsync("photosynthesis.png", imageBytes);

Console.WriteLine("Image saved as photosynthesis.png");

}

}

}

}

}

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1/models/gemini-3.1-flash-image:generateContent" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"contents": [{

"role": "user",

"parts": [

{"text": "Create a vibrant infographic that explains photosynthesis as if it were a recipe for a plants favorite food. Show the \"ingredients\" (sunlight, water, CO2) and the \"finished dish\" (sugar/energy). The style should be like a page from a colorful kids cookbook, suitable for a 4th grader."}

]

}],

"generationConfig": {

"responseModalities": ["TEXT", "IMAGE"]

}

}'

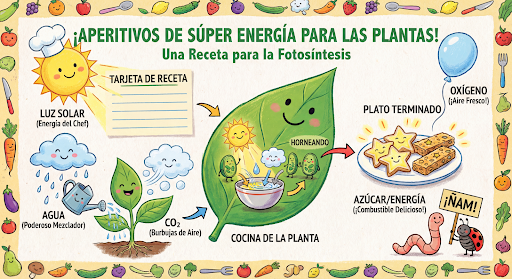

You can then use the same chat to change the language of the graphic to Spanish.

Python

message = "Update this infographic to be in Spanish. Do not change any other elements of the image."

aspect_ratio = "16:9" # "1:1","1:4","1:8","2:3","3:2","3:4","4:1","4:3","4:5","5:4","8:1","9:16","16:9","21:9"

resolution = "2K" # "512", "1K", "2K", "4K"

response = chat.send_message(message,

config=types.GenerateContentConfig(

response_format={"image": {"aspect_ratio": aspect_ratio, "image_size": resolution}},

))

for part in response.parts:

if part.text is not None:

print(part.text)

elif image:= part.as_image():

image.save("photosynthesis_spanish.png")

JavaScript

const message = 'Update this infographic to be in Spanish. Do not change any other elements of the image.';

const aspectRatio = '16:9';

const resolution = '2K';

let response = await chat.sendMessage({

message,

config: {

responseModalities: ['TEXT', 'IMAGE'],

responseFormat: {

image: {

aspectRatio: aspectRatio,

imageSize: resolution,

}

},

tools: [{googleSearch: {}}],

},

});

for (const part of response.candidates[0].content.parts) {

if (part.text) {

console.log(part.text);

} else if (part.inlineData) {

const imageData = part.inlineData.data;

const buffer = Buffer.from(imageData, "base64");

fs.writeFileSync("photosynthesis2.png", buffer);

console.log("Image saved as photosynthesis2.png");

}

}

Go

message = "Update this infographic to be in Spanish. Do not change any other elements of the image."

aspect_ratio := "16:9" // "1:1","1:4","1:8","2:3","3:2","3:4","4:1","4:3","4:5","5:4","8:1","9:16","16:9","21:9"

resolution := "2K" // "512", "1K", "2K", "4K"

model.GenerationConfig.ImageConfig = &pb.ImageConfig{

AspectRatio: aspect_ratio,

ImageSize: resolution,

}

resp, err = chat.SendMessage(ctx, genai.Text(message))

if err != nil {

log.Fatal(err)

}

for _, part := range resp.Candidates[0].Content.Parts {

if txt, ok := part.(genai.Text); ok {

fmt.Printf("%s", string(txt))

} else if img, ok := part.(genai.ImageData); ok {

err := os.WriteFile("photosynthesis_spanish.png", img.Data, 0644)

if err != nil {

log.Fatal(err)

}

}

}

Java

String aspectRatio = "16:9"; // "1:1","1:4","1:8","2:3","3:2","3:4","4:1","4:3","4:5","5:4","8:1","9:16","16:9","21:9"

String resolution = "2K"; // "512", "1K", "2K", "4K"

config = GenerateContentConfig.builder()

.responseModalities("TEXT", "IMAGE")

.imageConfig(ImageConfig.builder()

.aspectRatio(aspectRatio)

.imageSize(resolution)

.build())

.build();

response = chat.sendMessage(

"Update this infographic to be in Spanish. " +

"Do not change any other elements of the image.",

config);

for (Part part : response.parts()) {

if (part.text().isPresent()) {

System.out.println(part.text().get());

} else if (part.inlineData().isPresent()) {

var blob = part.inlineData().get();

if (blob.data().isPresent()) {

Files.write(Paths.get("photosynthesis_spanish.png"), blob.data().get());

}

}

}

C#

using Google.GenAI;

using System;

using System.Collections.Generic;

using System.IO;

using System.Threading.Tasks;

public class MultiturnImageEditingSpanish {

public static async Task Main(string[] args) {

var client = new Client();

var response = await client.Models.GenerateContentAsync(

model: "gemini-3.1-flash-image",

contents: new List<Part>

{

new Part { Text = "Update this infographic to be in Spanish. Do not change any other elements of the image." }

},

config: new GenerateContentConfig

{

ResponseModalities = new List<string> { "TEXT", "IMAGE" },

ImageConfig = new ImageConfig

{

AspectRatio = "16:9",

ImageSize = "2K"

}

}

);

foreach (var candidate in response.Candidates) {

foreach (var part in candidate.Content.Parts) {

if (part.Text != null) {

Console.WriteLine(part.Text);

} else if (part.InlineData != null) {

var imageBytes = Convert.FromBase64String(part.InlineData.Data);

await File.WriteAllBytesAsync("photosynthesis_spanish.png", imageBytes);

Console.WriteLine("Image saved as photosynthesis_spanish.png");

}

}

}

}

}

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1/models/gemini-3.1-flash-image:generateContent" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H 'Content-Type: application/json' \

-d '{

"contents": [

{

"role": "user",

"parts": [{"text": "Create a vibrant infographic that explains photosynthesis..."}]

},

{

"role": "model",

"parts": [{"inline_data": {"mime_type": "image/png", "data": "<PREVIOUS_IMAGE_DATA>"}}]

},

{

"role": "user",

"parts": [{"text": "Update this infographic to be in Spanish. Do not change any other elements of the image."}]

}

],

"tools": [{"google_search": {}}],

"generationConfig": {

"responseModalities": ["TEXT", "IMAGE"],

"responseFormat": {

"image": {

"aspectRatio": "16:9",

"imageSize": "2K"

}

}

}

}'
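The REST request above is stateless: each call resends the whole conversation, including the previously generated image as a `model` turn. A minimal sketch of keeping that history in Python follows. The `ImageConversation` class and its method names are hypothetical and only mirror the JSON shape of the request above.

```python
import json


class ImageConversation:
    """Accumulates alternating user/model turns in the REST `contents` shape."""

    def __init__(self) -> None:
        self.contents: list[dict] = []

    def add_user_text(self, text: str) -> None:
        self.contents.append({"role": "user", "parts": [{"text": text}]})

    def add_model_image(self, base64_data: str, mime_type: str = "image/png") -> None:
        # Store the image the model returned so the next turn can refer to it.
        self.contents.append({
            "role": "model",
            "parts": [{"inline_data": {"mime_type": mime_type, "data": base64_data}}],
        })

    def request_body(self) -> dict:
        # A request only makes sense when the newest turn is a user instruction.
        if not self.contents or self.contents[-1]["role"] != "user":
            raise ValueError("the last turn must come from the user")
        return {
            "contents": list(self.contents),
            "generationConfig": {"responseModalities": ["TEXT", "IMAGE"]},
        }


if __name__ == "__main__":
    conversation = ImageConversation()
    conversation.add_user_text("Create a vibrant infographic that explains photosynthesis...")
    conversation.add_model_image("<PREVIOUS_IMAGE_DATA>")
    conversation.add_user_text("Update this infographic to be in Spanish.")
    print(json.dumps(conversation.request_body(), indent=2))
```

The SDK chat objects shown earlier manage this history for you; a helper like this is only relevant when calling the REST endpoint directly.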

What's new in Gemini 3 image models

Gemini 3 offers state-of-the-art image generation and editing models. Gemini 3.1 Flash Image is optimized for speed and high-volume use cases, and Gemini 3 Pro Image is optimized for professional asset production. Designed to tackle the most challenging workflows through advanced reasoning, they excel at complex, multi-turn creation and modification tasks.

- High-resolution output: Built-in generation capabilities for 1K, 2K, and 4K images.

  - Gemini 3.1 Flash Image adds the smaller 512 (0.5K) resolution.

- Advanced text rendering: Can generate legible, stylized text for infographics, menus, diagrams, and marketing assets.

- Grounding with Google Search: The model can use Google Search as a tool to verify facts and generate imagery based on real-time data (e.g., current weather maps, stock charts, recent events).

  - Gemini 3.1 Flash Image adds Grounding with Google Search for images alongside web search.

- Thinking mode: The model uses a "thinking" process to reason through complex prompts. It generates interim "thought images" (visible in the backend but not charged) to refine the composition before producing the final high-quality output.

- Up to 14 reference images: You can now mix up to 14 reference images to produce the final image.

- New aspect ratios: Gemini 3.1 Flash Image adds 1:4, 4:1, 1:8, and 8:1 aspect ratios.
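The resolution and aspect-ratio options above lend themselves to a client-side check before a request is sent. The sketch below builds the `generationConfig` fragment in the shape used by the REST example earlier on this page. The value lists are copied from the comments in the samples; the rule that the Flash-only additions are rejected for other models is an assumption inferred from the bullets above, not confirmed API behavior, and `image_generation_config` is a hypothetical helper.

```python
# Values copied from the comments in the samples on this page.
ASPECT_RATIOS = {"1:1", "1:4", "1:8", "2:3", "3:2", "3:4", "4:1", "4:3",
                 "4:5", "5:4", "8:1", "9:16", "16:9", "21:9"}
IMAGE_SIZES = {"512", "1K", "2K", "4K"}

# Assumption: options described as Gemini 3.1 Flash Image additions are
# unavailable on other models. Verify against the API before relying on this.
FLASH_MODEL = "gemini-3.1-flash-image"
FLASH_ONLY_RATIOS = {"1:4", "4:1", "1:8", "8:1"}
FLASH_ONLY_SIZES = {"512"}


def image_generation_config(model: str, aspect_ratio: str, image_size: str) -> dict:
    """Validate the options and return a generationConfig fragment (hypothetical helper)."""
    if aspect_ratio not in ASPECT_RATIOS:
        raise ValueError(f"unsupported aspect ratio: {aspect_ratio!r}")
    if image_size not in IMAGE_SIZES:
        raise ValueError(f"unsupported image size: {image_size!r}")
    if model != FLASH_MODEL:
        if aspect_ratio in FLASH_ONLY_RATIOS:
            raise ValueError(f"{aspect_ratio} is listed only for {FLASH_MODEL}")
        if image_size in FLASH_ONLY_SIZES:
            raise ValueError(f"{image_size} is listed only for {FLASH_MODEL}")
    return {
        "responseModalities": ["TEXT", "IMAGE"],
        "responseFormat": {
            "image": {"aspectRatio": aspect_ratio, "imageSize": image_size},
        },
    }


if __name__ == "__main__":
    print(image_generation_config("gemini-3.1-flash-image", "16:9", "2K"))
```

Failing early on the client keeps a typo such as `"2k"` from costing a round trip, though the server remains the source of truth for what each model accepts.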

Use up to 14 reference images

Gemini 3 image models let you mix up to 14 reference images. These 14 images can include the following:

| Gemini 3.1 Flash Image | Gemini 3 Pro Image |
|---|---|
| Up to 10 images of objects with high fidelity to include in the final image | Up to 6 images of objects with high fidelity to include in the final image |
| Up to 4 images of characters to maintain character consistency | Up to 5 images of characters to maintain character consistency |
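The per-model limits in the table above can be checked before uploading anything. This is a minimal sketch under the assumption that the table's numbers are enforced per category and that the overall cap of 14 applies to the sum; `REFERENCE_LIMITS` and `check_reference_images` are hypothetical names.

```python
# Limits copied from the table above: object images and character images per model.
REFERENCE_LIMITS = {
    "gemini-3.1-flash-image": {"objects": 10, "characters": 4},
    "gemini-3-pro-image": {"objects": 6, "characters": 5},
}
MAX_REFERENCE_IMAGES = 14


def check_reference_images(model: str, objects: int, characters: int) -> list[str]:
    """Return a list of human-readable problems; an empty list means the counts fit."""
    limits = REFERENCE_LIMITS.get(model)
    if limits is None:
        raise ValueError(f"no reference-image limits known for {model!r}")
    problems = []
    if objects > limits["objects"]:
        problems.append(f"{objects} object images exceeds the limit of {limits['objects']}")
    if characters > limits["characters"]:
        problems.append(f"{characters} character images exceeds the limit of {limits['characters']}")
    if objects + characters > MAX_REFERENCE_IMAGES:
        problems.append(f"{objects + characters} images in total exceeds {MAX_REFERENCE_IMAGES}")
    return problems


if __name__ == "__main__":
    print(check_reference_images("gemini-3-pro-image", objects=7, characters=2))
```

The group-photo samples that follow pass five character images, which fits the Gemini 3 Pro Image column but exceeds the four listed for Gemini 3.1 Flash Image under this reading of the table.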

Python

from google import genai

from google.genai import types

from PIL import Image

prompt = "An office group photo of these people, they are making funny faces."

aspect_ratio = "5:4" # "1:1","1:4","1:8","2:3","3:2","3:4","4:1","4:3","4:5","5:4","8:1","9:16","16:9","21:9"

resolution = "2K" # "512", "1K", "2K", "4K"

client = genai.Client()

response = client.models.generate_content(

model="gemini-3.1-flash-image",

contents=[

prompt,

Image.open('person1.png'),

Image.open('person2.png'),

Image.open('person3.png'),

Image.open('person4.png'),

Image.open('person5.png'),

],

config=types.GenerateContentConfig(

response_modalities=['TEXT', 'IMAGE'],

response_format={"image": {"aspect_ratio": aspect_ratio, "image_size": resolution}},

)

)

for part in response.parts:

if part.text is not None:

print(part.text)

elif image:= part.as_image():

image.save("office.png")

JavaScript

import { GoogleGenAI } from "@google/genai";

import * as fs from "node:fs";

async function main() {

const ai = new GoogleGenAI({});

const prompt =

'An office group photo of these people, they are making funny faces.';

const aspectRatio = '5:4';

const resolution = '2K';

const contents = [

{ text: prompt },

{

inlineData: {

mimeType: "image/jpeg",

data: base64ImageFile1,

},

},

{

inlineData: {

mimeType: "image/jpeg",

data: base64ImageFile2,

},

},

{

inlineData: {

mimeType: "image/jpeg",

data: base64ImageFile3,

},

},

{

inlineData: {

mimeType: "image/jpeg",

data: base64ImageFile4,

},

},

{

inlineData: {

mimeType: "image/jpeg",

data: base64ImageFile5,

},

}

];

const response = await ai.models.generateContent({

model: 'gemini-3.1-flash-image',

contents: contents,

config: {

responseModalities: ['TEXT', 'IMAGE'],

responseFormat: {

image: {

aspectRatio: aspectRatio,

imageSize: resolution,

}

},

},

});

for (const part of response.candidates[0].content.parts) {

if (part.text) {

console.log(part.text);

} else if (part.inlineData) {

const imageData = part.inlineData.data;

const buffer = Buffer.from(imageData, "base64");

fs.writeFileSync("image.png", buffer);

console.log("Image saved as image.png");

}

}

}

main();

Go

package main

import (

"context"

"fmt"

"log"

"os"

"google.golang.org/genai"

)

func main() {

ctx := context.Background()

client, err := genai.NewClient(ctx, nil)

if err != nil {

log.Fatal(err)

}

defer client.Close()

model := client.GenerativeModel("gemini-3.1-flash-image")

model.GenerationConfig = &pb.GenerationConfig{

ResponseModalities: []pb.ResponseModality{genai.Text, genai.Image},

ImageConfig: &pb.ImageConfig{

AspectRatio: "5:4",

ImageSize: "2K",

},

}

img1, err := os.ReadFile("person1.png")

if err != nil { log.Fatal(err) }

img2, err := os.ReadFile("person2.png")

if err != nil { log.Fatal(err) }

img3, err := os.ReadFile("person3.png")

if err != nil { log.Fatal(err) }

img4, err := os.ReadFile("person4.png")

if err != nil { log.Fatal(err) }

img5, err := os.ReadFile("person5.png")

if err != nil { log.Fatal(err) }

parts := []genai.Part{

genai.Text("An office group photo of these people, they are making funny faces."),

genai.ImageData{MIMEType: "image/png", Data: img1},

genai.ImageData{MIMEType: "image/png", Data: img2},

genai.ImageData{MIMEType: "image/png", Data: img3},

genai.ImageData{MIMEType: "image/png", Data: img4},

genai.ImageData{MIMEType: "image/png", Data: img5},

}

resp, err := model.GenerateContent(ctx, parts...)

if err != nil {

log.Fatal(err)

}

for _, part := range resp.Candidates[0].Content.Parts {

if txt, ok := part.(genai.Text); ok {

fmt.Printf("%s", string(txt))

} else if img, ok := part.(genai.ImageData); ok {

err := os.WriteFile("office.png", img.Data, 0644)

if err != nil {

log.Fatal(err)

}

}

}

}

Java

import com.google.genai.Client;

import com.google.genai.types.Content;

import com.google.genai.types.GenerateContentConfig;

import com.google.genai.types.GenerateContentResponse;

import com.google.genai.types.ImageConfig;

import com.google.genai.types.Part;

import java.io.IOException;

import java.nio.file.Files;

import java.nio.file.Path;

import java.nio.file.Paths;

public class GroupPhoto {

public static void main(String[] args) throws IOException {

try (Client client = new Client()) {

GenerateContentConfig config = GenerateContentConfig.builder()

.responseModalities("TEXT", "IMAGE")

.imageConfig(ImageConfig.builder()

.aspectRatio("5:4")

.imageSize("2K")

.build())

.build();

GenerateContentResponse response = client.models.generateContent(

"gemini-3.1-flash-image",

Content.fromParts(

Part.fromText("An office group photo of these people, they are making funny faces."),

Part.fromBytes(Files.readAllBytes(Path.of("person1.png")), "image/png"),

Part.fromBytes(Files.readAllBytes(Path.of("person2.png")), "image/png"),

Part.fromBytes(Files.readAllBytes(Path.of("person3.png")), "image/png"),

Part.fromBytes(Files.readAllBytes(Path.of("person4.png")), "image/png"),

Part.fromBytes(Files.readAllBytes(Path.of("person5.png")), "image/png")

), config);

for (Part part : response.parts()) {

if (part.text().isPresent()) {

System.out.println(part.text().get());

} else if (part.inlineData().isPresent()) {

var blob = part.inlineData().get();

if (blob.data().isPresent()) {

Files.write(Paths.get("office.png"), blob.data().get());

}

}

}

}

}

}

C#

using Google.GenAI;

using Google.GenAI.Types;

using System;

using System.Collections.Generic;

using System.IO;

using System.Threading.Tasks;

public class GroupPhoto {

public static async Task Main(string[] args) {

var client = new Client();

var response = await client.Models.GenerateContentAsync(

model: "gemini-3.1-flash-image",

contents: new List<Part>

{

new Part { Text = "An office group photo of these people, they are making funny faces." },

new Part { FileData = new FileData { FileUri = "file:///person1.png" } },

new Part { FileData = new FileData { FileUri = "file:///person2.png" } },

new Part { FileData = new FileData { FileUri = "file:///person3.png" } },

new Part { FileData = new FileData { FileUri = "file:///person4.png" } },

new Part { FileData = new FileData { FileUri = "file:///person5.png" } }

},

config: new GenerateContentConfig

{

ResponseModalities = new List<string> { "TEXT", "IMAGE" },

ImageConfig = new ImageConfig

{

AspectRatio = "5:4",

ImageSize = "2K"

}

}

);

foreach (var candidate in response.Candidates) {

foreach (var part in candidate.Content.Parts) {

if (part.Text != null) {

Console.WriteLine(part.Text);

} else if (part.InlineData != null) {

var imageBytes = Convert.FromBase64String(part.InlineData.Data);

await File.WriteAllBytesAsync("office.png", imageBytes);

Console.WriteLine("Image saved as office.png");

}

}

}

}

}

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1/models/gemini-3.1-flash-image:generateContent" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H 'Content-Type: application/json' \

-d "{

\"contents\": [{

\"parts\":[

{\"text\": \"An office group photo of these people, they are making funny faces.\"},

{\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_DATA_IMG_1>\"}},

{\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_DATA_IMG_2>\"}},

{\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_DATA_IMG_3>\"}},

{\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_DATA_IMG_4>\"}},

{\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_DATA_IMG_5>\"}}

]

}],

\"generationConfig\": {

\"responseModalities\": [\"TEXT\", \"IMAGE\"],

\"responseFormat\": {

\"image\": {

\"aspectRatio\": \"5:4\",

\"imageSize\": \"2K\"

}

}

}

}"

Grounding with Google Search

Use the Google Search tool to generate images based on real-time information, such as weather forecasts, stock charts, or recent events.

Note that when you use Grounding with Google Search for image generation, image-based search results are not passed to the generation model and are excluded from the response (see Grounding with Google Image Search).

Python

from google import genai
from google.genai import types

prompt = "Visualize the current weather forecast for the next 5 days in San Francisco as a clean, modern weather chart. Add a visual on what I should wear each day"
aspect_ratio = "16:9"  # "1:1","1:4","1:8","2:3","3:2","3:4","4:1","4:3","4:5","5:4","8:1","9:16","16:9","21:9"

client = genai.Client()

response = client.models.generate_content(
    model="gemini-3.1-flash-image",
    contents=prompt,
    config=types.GenerateContentConfig(
        response_modalities=['TEXT', 'IMAGE'],
        # The key must be the string "aspect_ratio", not the variable's value.
        response_format={"image": {"aspect_ratio": aspect_ratio}},
        tools=[{"google_search": {}}]
    )
)

# Print any text parts and save the generated image.
for part in response.parts:
    if part.text is not None:
        print(part.text)
    elif image := part.as_image():
        image.save("weather.png")

JavaScript

import { GoogleGenAI } from "@google/genai";

import * as fs from "node:fs";

async function main() {

const ai = new GoogleGenAI({});

const prompt = 'Visualize the current weather forecast for the next 5 days in San Francisco as a clean, modern weather chart. Add a visual on what I should wear each day';

const aspectRatio = '16:9';

const resolution = '2K';

const response = await ai.models.generateContent({

model: 'gemini-3.1-flash-image',

contents: prompt,

config: {

responseModalities: ['TEXT', 'IMAGE'],

responseFormat: {

image: {

aspectRatio: aspectRatio,

imageSize: resolution,

}

},

tools: [{ googleSearch: {} }]

},

});

for (const part of response.candidates[0].content.parts) {

if (part.text) {

console.log(part.text);

} else if (part.inlineData) {

const imageData = part.inlineData.data;

const buffer = Buffer.from(imageData, "base64");

fs.writeFileSync("image.png", buffer);

console.log("Image saved as image.png");

}

}

}

main();

Java

import com.google.genai.Client;

import com.google.genai.types.GenerateContentConfig;

import com.google.genai.types.GenerateContentResponse;

import com.google.genai.types.GoogleSearch;

import com.google.genai.types.ImageConfig;

import com.google.genai.types.Part;

import com.google.genai.types.Tool;

import java.io.IOException;

import java.nio.file.Files;

import java.nio.file.Paths;

public class SearchGrounding {

public static void main(String[] args) throws IOException {

try (Client client = new Client()) {

GenerateContentConfig config = GenerateContentConfig.builder()

.responseModalities("TEXT", "IMAGE")

.imageConfig(ImageConfig.builder()

.aspectRatio("16:9")

.build())

.tools(Tool.builder()

.googleSearch(GoogleSearch.builder().build())

.build())

.build();

GenerateContentResponse response = client.models.generateContent(

"gemini-3.1-flash-image", """

Visualize the current weather forecast for the next 5 days

in San Francisco as a clean, modern weather chart.

Add a visual on what I should wear each day

""",

config);

for (Part part : response.parts()) {

if (part.text().isPresent()) {

System.out.println(part.text().get());

} else if (part.inlineData().isPresent()) {

var blob = part.inlineData().get();

if (blob.data().isPresent()) {

Files.write(Paths.get("weather.png"), blob.data().get());

}

}

}

}

}

}

C#

using Google.GenAI;

using Google.GenAI.Types;

using System;

using System.Collections.Generic;

using System.IO;

using System.Threading.Tasks;

public class SearchGrounding {

public static async Task Main(string[] args) {

var client = new Client();

var response = await client.Models.GenerateContentAsync(

model: "gemini-3.1-flash-image",

contents: new List<Part>

{

new Part { Text = "Visualize the current weather forecast for the next 5 days in San Francisco as a clean, modern weather chart. Add a visual on what I should wear each day" }

},

config: new GenerateContentConfig

{

ResponseModalities = new List<string> { "TEXT", "IMAGE" },

ImageConfig = new ImageConfig

{

AspectRatio = "16:9"

},

Tools = new List<Tool> { new Tool { GoogleSearch = new GoogleSearch() } }

}

);

foreach (var candidate in response.Candidates) {

foreach (var part in candidate.Content.Parts) {

if (part.Text != null) {

Console.WriteLine(part.Text);

} else if (part.InlineData != null) {

var imageBytes = Convert.FromBase64String(part.InlineData.Data);

await File.WriteAllBytesAsync("weather.png", imageBytes);

Console.WriteLine("Image saved as weather.png");

}

}

}

}

}

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1/models/gemini-3.1-flash-image:generateContent" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"contents": [{"parts": [{"text": "Visualize the current weather forecast for the next 5 days in San Francisco as a clean, modern weather chart. Add a visual on what I should wear each day"}]}],

"tools": [{"google_search": {}}],

"generationConfig": {

"responseModalities": ["TEXT", "IMAGE"],

"responseFormat": {

"image": {"aspectRatio": "16:9"}

}

}

}'

The response includes groundingMetadata, which contains the following required fields:

- searchEntryPoint: Contains the HTML and CSS needed to render the required search suggestions.

- groundingChunks: Returns the top 3 web sources used to ground the generated image.
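As an illustration of how these two fields might be read, here is a minimal sketch that works on a REST response already parsed into a Python dict. The helper name `summarize_grounding` and the sample payload are hypothetical, and the `web.uri` / `web.title` shape of each chunk is an assumption that this section does not spell out.

```python
from typing import Any


def summarize_grounding(response: dict[str, Any]) -> dict[str, Any]:
    """Collect the search-suggestion HTML and the web sources from a response dict."""
    candidates = response.get("candidates") or [{}]
    metadata = candidates[0].get("groundingMetadata") or {}

    # searchEntryPoint.renderedContent carries the HTML/CSS for search suggestions.
    entry_point = metadata.get("searchEntryPoint") or {}
    rendered_html = entry_point.get("renderedContent", "")

    # ASSUMPTION: each web chunk looks like {"web": {"uri": ..., "title": ...}}.
    sources = []
    for chunk in metadata.get("groundingChunks", []):
        web = chunk.get("web")
        if web:
            sources.append((web.get("title", ""), web.get("uri", "")))

    return {"rendered_html": rendered_html, "sources": sources}


# Hypothetical payload, shaped like the fields described above.
sample_response = {
    "candidates": [{
        "groundingMetadata": {
            "searchEntryPoint": {"renderedContent": "<div>suggestions</div>"},
            "groundingChunks": [
                {"web": {"uri": "https://example.com/forecast", "title": "Forecast"}},
            ],
        }
    }]
}

summary = summarize_grounding(sample_response)
print(summary["sources"])
```

The helper returns empty values rather than raising when the metadata is absent, so the same code path can handle ungrounded responses.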

Grounding with Google Image Search (3.1 Flash)

Grounding with Google Image Search lets models use web images retrieved through Google Search as visual context for image generation. Image Search is a new search type within the existing Grounding with Google Search tool, and it works alongside standard Web Search.

To enable Image Search, configure the googleSearch tool in your API request and specify imageSearch within the searchTypes object. Image Search can be used on its own or together with Web Search.

Note that Grounding with Google Image Search cannot be used to search for people.
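The tool configuration described above can be sketched as a small builder for the REST request body. The function name `build_search_tool` is hypothetical; the key names mirror the REST sample in this section.

```python
import json


def build_search_tool(web: bool = True, image: bool = True) -> dict:
    """Build the google_search tool entry with the requested search types."""
    if not (web or image):
        raise ValueError("Enable at least one search type")
    search_types = {}
    if web:
        search_types["webSearch"] = {}
    if image:
        search_types["imageSearch"] = {}
    return {"google_search": {"searchTypes": search_types}}


# Image Search on its own, without Web Search.
request_body = {
    "contents": [{"parts": [{"text": "A detailed painting of a Timareta butterfly resting on a flower"}]}],
    "tools": [build_search_tool(web=False, image=True)],
    "generationConfig": {"responseModalities": ["IMAGE"]},
}

print(json.dumps(request_body["tools"]))
```

Calling `build_search_tool()` with the defaults enables both search types, which matches the combined configuration used in the samples that follow.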

Python

from google import genai
from google.genai import types
from IPython.display import HTML, display  # display() and HTML() are notebook helpers

prompt = "A detailed painting of a Timareta butterfly resting on a flower"

client = genai.Client()

response = client.models.generate_content(
    model="gemini-3.1-flash-image",
    contents=prompt,
    config=types.GenerateContentConfig(
        response_modalities=["IMAGE"],
        tools=[
            types.Tool(google_search=types.GoogleSearch(
                search_types=types.SearchTypes(
                    web_search=types.WebSearch(),
                    image_search=types.ImageSearch()
                )
            ))
        ]
    )
)

# Display grounding sources if available
if response.candidates and response.candidates[0].grounding_metadata and response.candidates[0].grounding_metadata.search_entry_point:
    display(HTML(response.candidates[0].grounding_metadata.search_entry_point.rendered_content))

JavaScript

import { GoogleGenAI } from "@google/genai";

async function main() {

const ai = new GoogleGenAI({});

const prompt = "A detailed painting of a Timareta butterfly resting on a flower";

const response = await ai.models.generateContent({

model: "gemini-3.1-flash-image",

contents: prompt,

config: {

responseModalities: ["IMAGE"],

tools: [

{

googleSearch: {

searchTypes: {

webSearch: {},

imageSearch: {}

}

}

}

]

}

});

// Display grounding sources if available

if (response.candidates && response.candidates[0].groundingMetadata && response.candidates[0].groundingMetadata.searchEntryPoint) {

console.log(response.candidates[0].groundingMetadata.searchEntryPoint.renderedContent);

}

}

main();

Go

package main

import (

"context"

"fmt"

"log"

"google.golang.org/genai"

pb "google.golang.org/genai/schema"

)

func main() {

ctx := context.Background()

client, err := genai.NewClient(ctx, nil)

if err != nil {

log.Fatal(err)

}

defer client.Close()

model := client.GenerativeModel("gemini-3.1-flash-image")

model.Tools = []*pb.Tool{

{

GoogleSearch: &pb.GoogleSearch{

SearchTypes: &pb.SearchTypes{

WebSearch: &pb.WebSearch{},

ImageSearch: &pb.ImageSearch{},

},

},

},

}

model.GenerationConfig = &pb.GenerationConfig{

ResponseModalities: []pb.ResponseModality{genai.Image},

}

prompt := "A detailed painting of a Timareta butterfly resting on a flower"

resp, err := model.GenerateContent(ctx, genai.Text(prompt))

if err != nil {

log.Fatal(err)

}

if resp.Candidates[0].GroundingMetadata != nil && resp.Candidates[0].GroundingMetadata.SearchEntryPoint != nil {

fmt.Println(resp.Candidates[0].GroundingMetadata.SearchEntryPoint.RenderedContent)

}

}

Java

import com.google.genai.Client;

import com.google.genai.types.GenerateContentConfig;

import com.google.genai.types.GenerateContentResponse;

import com.google.genai.types.GoogleSearch;

import com.google.genai.types.ImageSearch;

import com.google.genai.types.SearchTypes;

import com.google.genai.types.Tool;

import com.google.genai.types.WebSearch;

import java.io.IOException;

public class ImageSearchGrounding {

public static void main(String[] args) throws IOException {

try (Client client = new Client()) {

GenerateContentConfig config = GenerateContentConfig.builder()

.responseModalities("IMAGE")

.tools(Tool.builder()

.googleSearch(GoogleSearch.builder()

.searchTypes(SearchTypes.builder()

.webSearch(WebSearch.builder().build())

.imageSearch(ImageSearch.builder().build())

.build())

.build())

.build())

.build();

GenerateContentResponse response = client.models.generateContent(

"gemini-3.1-flash-image",

"A detailed painting of a Timareta butterfly resting on a flower",

config);

if (response.candidates().isPresent() && !response.candidates().get().isEmpty()) {

var candidate = response.candidates().get().get(0);

if (candidate.groundingMetadata().isPresent() && candidate.groundingMetadata().get().searchEntryPoint().isPresent()) {

System.out.println(candidate.groundingMetadata().get().searchEntryPoint().get().renderedContent().orElse(""));

}

}

}

}

}

C#

using Google.GenAI;

using Google.GenAI.Types;

using System;

using System.Collections.Generic;

using System.Threading.Tasks;

public class ImageSearchGrounding {

public static async Task Main(string[] args) {

var client = new Client();

var response = await client.Models.GenerateContentAsync(

model: "gemini-3.1-flash-image",

contents: new List<Part>

{

new Part { Text = "A detailed painting of a Timareta butterfly resting on a flower" }

},

config: new GenerateContentConfig

{

ResponseModalities = new List<string> { "IMAGE" },

Tools = new List<Tool>

{

new Tool

{

GoogleSearch = new GoogleSearch

{

SearchTypes = new SearchTypes

{

WebSearch = new WebSearch(),

ImageSearch = new ImageSearch()

}

}

}

}

}

);

foreach (var candidate in response.Candidates) {

if (candidate.GroundingMetadata != null && candidate.GroundingMetadata.SearchEntryPoint != null) {

Console.WriteLine(candidate.GroundingMetadata.SearchEntryPoint.RenderedContent);

}

}

}

}

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1/models/gemini-3.1-flash-image:generateContent" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"contents": [{"parts": [{"text": "A detailed painting of a Timareta butterfly resting on a flower"}]}],

"tools": [{"google_search": {"searchTypes": {"webSearch": {}, "imageSearch": {}}}}],

"generationConfig": {

"responseModalities": ["IMAGE"]

}

}'

Display requirements

When you use Image Search within Grounding with Google Search, you must meet the following conditions:

- Source attribution: You must provide a link to the web page that contains the source image (the "containing page", not the image file itself), presented so that the user recognizes it as a link.

- Direct navigation: If you also choose to display the source images, you must provide a direct, single-click path from each source image to the web page that contains it. Any other implementation that delays or abstracts the end user's access to the source web page is not permitted, including but not limited to multi-click paths or an intermediate image viewer.

Response

For grounded responses that use Image Search, the API provides clear attribution and metadata that link its output to verified sources. Key fields in the groundingMetadata object include:

- imageSearchQueries: The specific queries the model used for visual context (Image Search).

- groundingChunks: Contains source information for the retrieved results. Image sources are returned as redirect URLs in a new image chunk type, which includes:

  - uri: The URL of the web page for attribution (the landing page).

  - image_uri: The direct URL of the image.

- groundingSupports: Provides specific mappings that link the generated content to its relevant citation source in the chunks.

- searchEntryPoint: Includes the "Google Search" chip, which contains compliant HTML and CSS for rendering search suggestions.
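One way to satisfy both display requirements is to make every attribution, and every source image you choose to show, a direct link to the containing page. The sketch below assumes each image chunk is keyed as `image` and carries the `uri` and `image_uri` fields listed above; the `image` key and the helper name `attribution_html` are assumptions, not confirmed API details.

```python
from html import escape


def attribution_html(grounding_chunks: list[dict], show_images: bool = False) -> str:
    """Render image sources as links that reach the containing page in one click."""
    items = []
    for chunk in grounding_chunks:
        image = chunk.get("image")  # ASSUMPTION: image chunks use the key "image"
        if not image or "uri" not in image:
            continue
        page = escape(image["uri"], quote=True)
        if show_images and image.get("image_uri"):
            # The image itself is the link, so one click leads to the source page.
            src = escape(image["image_uri"], quote=True)
            body = f'<img src="{src}" alt="Source image">'
        else:
            body = page
        items.append(f'<li><a href="{page}">{body}</a></li>')
    return "<ul>" + "".join(items) + "</ul>"


# Hypothetical chunks for illustration.
chunks = [
    {"image": {"uri": "https://example.com/page", "image_uri": "https://example.com/a.png"}},
    {"web": {"uri": "https://example.com/other", "title": "Other"}},
]
print(attribution_html(chunks, show_images=True))
```

The link target is always `uri` (the containing page), never `image_uri`, which keeps the attribution pointed at the page rather than the image file.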

Video-to-image generation (3.1 Flash)

Video-to-image generation lets you create new images using a video's context as a multimodal reference. This is useful for creating high-quality video thumbnails, cinematic posters, summary infographics, or new illustrations inspired by a video scene.

During generation, the model analyzes the video frames in context (up to the model's input token limit of 131,072) to extract visual themes and key events, then uses them together with your text prompt to synthesize the output image.

You can pass public YouTube URLs directly in your API request, or upload local video files with the Files API.
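Because frames are sampled at the `fps` you request and must fit within the 131,072-token input limit, a rough budget check can help when choosing a sampling rate. The sketch below is only an estimate: the per-frame cost of 258 tokens is an assumption that this section does not state, audio and other overhead are ignored, and the helper name `max_video_seconds` is hypothetical.

```python
INPUT_TOKEN_LIMIT = 131_072  # input token limit quoted in the text above
TOKENS_PER_FRAME = 258       # ASSUMPTION: per-frame cost, not stated in this section


def max_video_seconds(fps: float, prompt_tokens: int = 0,
                      tokens_per_frame: int = TOKENS_PER_FRAME) -> float:
    """Estimate how many seconds of video fit in the input budget at a given fps."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    budget = INPUT_TOKEN_LIMIT - prompt_tokens
    if budget <= 0:
        return 0.0
    max_frames = budget // tokens_per_frame  # whole frames only
    return max_frames / fps


# At the 0.5 fps used in the samples, one frame is taken every two seconds.
print(max_video_seconds(fps=0.5))
```

Under these assumptions, halving `fps` doubles the video length that fits, which is why a low sampling rate suits longer videos.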

Python

from google import genai
from google.genai import types

client = genai.Client()

# Pass a public YouTube video URL as part of the contents
response = client.models.generate_content(
    model="gemini-3.1-flash-image",
    contents=[
        types.Part(
            file_data=types.FileData(file_uri="https://www.youtube.com/watch?v=UTdfxFyOQTI"),
            video_metadata=types.VideoMetadata(fps=0.5)
        ),
        "Can you create an infographics that explains what this video is about?"
    ]
)

# Save the generated image part
for part in response.parts:
    if part.inline_data is not None:
        image = part.as_image()
        image.save("video_poster.png")
        print("Image saved as video_poster.png")

JavaScript

import { GoogleGenAI } from "@google/genai";

import * as fs from "node:fs";

async function main() {

const ai = new GoogleGenAI({});

const response = await ai.models.generateContent({

model: "gemini-3.1-flash-image",

contents: [

{

fileData: {

fileUri: "https://www.youtube.com/watch?v=UTdfxFyOQTI",

},

videoMetadata: {

fps: 0.5

}

},

{ text: "Can you create an infographics that explains what this video is about?" }

]

});

for (const part of response.candidates[0].content.parts) {

if (part.inlineData) {

const imageData = part.inlineData.data;

const buffer = Buffer.from(imageData, "base64");

fs.writeFileSync("video_poster.png", buffer);

console.log("Image saved as video_poster.png");

}

}

}

main();

Go

package main

import (

"context"

"log"

"os"

"google.golang.org/genai"

)

func main() {

ctx := context.Background()

client, err := genai.NewClient(ctx, nil)

if err != nil {

log.Fatal(err)

}

videoPart := genai.NewPartFromURI("https://www.youtube.com/watch?v=UTdfxFyOQTI", "video/mp4")

videoPart.VideoMetadata = &genai.VideoMetadata{FPS: genai.Ptr(0.5)}

parts := []*genai.Part{

videoPart,

genai.NewPartFromText("Can you create an infographics that explains what this video is about?"),

}

contents := []*genai.Content{

genai.NewContentFromParts(parts, genai.RoleUser),

}

result, err := client.Models.GenerateContent(

ctx,

"gemini-3.1-flash-image",

contents,

nil,

)

if err != nil {

log.Fatal(err)

}

for _, part := range result.Candidates[0].Content.Parts {

if part.InlineData != nil {

imageBytes := part.InlineData.Data

_ = os.WriteFile("video_poster.png", imageBytes, 0644)

log.Println("Image saved as video_poster.png")

}

}

}

Java

import com.google.genai.Client;

import com.google.genai.types.Content;

import com.google.genai.types.FileData;

import com.google.genai.types.GenerateContentResponse;

import com.google.genai.types.Part;

import com.google.genai.types.VideoMetadata;

import com.google.common.collect.ImmutableList;

import java.io.IOException;

import java.nio.file.Files;

import java.nio.file.Paths;

public class VideoToImage {

public static void main(String[] args) throws IOException {

try (Client client = new Client()) {

Part videoPart = Part.builder()

.fileData(FileData.builder()

.fileUri("https://www.youtube.com/watch?v=UTdfxFyOQTI")

.build())

.videoMetadata(VideoMetadata.builder()

.fps(0.5)

.build())

.build();

Part textPart = Part.builder()

.text("Can you create an infographics that explains what this video is about?")

.build();

GenerateContentResponse response = client.models.generateContent(

"gemini-3.1-flash-image",

Content.builder()

.role("user")

.parts(ImmutableList.of(videoPart, textPart))

.build());

for (Part part : response.parts()) {

if (part.inlineData().isPresent()) {

var blob = part.inlineData().get();

if (blob.data().isPresent()) {

Files.write(Paths.get("video_poster.png"), blob.data().get());

System.out.println("Image saved as video_poster.png");

}

}

}

}

}

}

C#

using Google.GenAI;

using Google.GenAI.Types;

using System;

using System.Collections.Generic;

using System.IO;

using System.Threading.Tasks;

public class VideoToImage {

public static async Task Main(string[] args) {

var client = new Client();

var response = await client.Models.GenerateContentAsync(

model: "gemini-3.1-flash-image",

contents: new List<Part>

{

new Part

{

FileData = new FileData { FileUri = "https://www.youtube.com/watch?v=UTdfxFyOQTI" },

VideoMetadata = new VideoMetadata { Fps = 0.5 }

},

new Part { Text = "Can you create an infographics that explains what this video is about?" }

}

);

foreach (var candidate in response.Candidates) {

foreach (var part in candidate.Content.Parts) {

if (part.InlineData != null) {

var imageBytes = Convert.FromBase64String(part.InlineData.Data);

await File.WriteAllBytesAsync("video_poster.png", imageBytes);

Console.WriteLine("Image saved as video_poster.png");

}

}

}

}

}

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1/models/gemini-3.1-flash-image:generateContent" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"contents": [{

"parts": [

{

"file_data": {

"file_uri": "https://www.youtube.com/watch?v=UTdfxFyOQTI"

},

"video_metadata": {

"fps": 0.5

}

},

{"text": "Can you create an infographics that explains what this video is about?"}

]

}]

}'

Generate images at up to 4K resolution

Gemini 3 image models generate 1K images by default, but they can also generate 2K and 4K images, as well as 512 (0.5K) images (Gemini 3.1 Flash Image only). To generate higher-resolution assets, specify image_size in generation_config.

You must use an uppercase "K" (for example, 1K, 2K, 4K). The 512 value takes no "K" suffix. Lowercase parameters (for example, 1k) are rejected.
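Since lowercase values are rejected, it can be convenient to validate the size on the client before sending a request. This is a minimal sketch; `validate_image_size` is a hypothetical helper, and the allowed values are the ones listed above.

```python
ALLOWED_IMAGE_SIZES = ("512", "1K", "2K", "4K")


def validate_image_size(value: str) -> str:
    """Return the size unchanged if valid, otherwise raise a descriptive ValueError."""
    candidate = value.strip()
    if candidate in ALLOWED_IMAGE_SIZES:
        return candidate
    if candidate.upper() in ALLOWED_IMAGE_SIZES:
        # Catches "1k", "2k", "4k": the K must be uppercase.
        raise ValueError(f"Use an uppercase K: {candidate.upper()!r}, not {candidate!r}")
    raise ValueError(f"Unsupported image size {candidate!r}; expected one of {ALLOWED_IMAGE_SIZES}")


print(validate_image_size("2K"))
```

The helper raises instead of silently uppercasing so that a mistyped value is surfaced where it was written.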

Python

from google import genai
from google.genai import types

prompt = "Da Vinci style anatomical sketch of a dissected Monarch butterfly. Detailed drawings of the head, wings, and legs on textured parchment with notes in English."
aspect_ratio = "1:1"  # "1:1","1:4","1:8","2:3","3:2","3:4","4:1","4:3","4:5","5:4","8:1","9:16","16:9","21:9"
resolution = "1K"  # "512", "1K", "2K", "4K"

client = genai.Client()

response = client.models.generate_content(
    model="gemini-3.1-flash-image",
    contents=prompt,
    config=types.GenerateContentConfig(
        response_modalities=['TEXT', 'IMAGE'],
        # Keys must be string literals, not the variables' values.
        response_format={"image": {"aspect_ratio": aspect_ratio, "image_size": resolution}},
    )
)

# Print any text parts and save the generated image.
for part in response.parts:
    if part.text is not None:
        print(part.text)
    elif image := part.as_image():
        image.save("butterfly.png")

JavaScript

import { GoogleGenAI } from "@google/genai";

import * as fs from "node:fs";

async function main() {

const ai = new GoogleGenAI({});

const prompt =

'Da Vinci style anatomical sketch of a dissected Monarch butterfly. Detailed drawings of the head, wings, and legs on textured parchment with notes in English.';

const aspectRatio = '1:1';

const resolution = '1K';

const response = await ai.models.generateContent({

model: 'gemini-3.1-flash-image',

contents: prompt,

config: {

responseModalities: ['TEXT', 'IMAGE'],

responseFormat: {

image: {

aspectRatio: aspectRatio,

imageSize: resolution,

}

},

},

});

for (const part of response.candidates[0].content.parts) {

if (part.text) {

console.log(part.text);

} else if (part.inlineData) {

const imageData = part.inlineData.data;

const buffer = Buffer.from(imageData, "base64");

fs.writeFileSync("image.png", buffer);

console.log("Image saved as image.png");

}

}

}

main();

Go

package main

import (

"context"

"fmt"

"log"

"os"

"google.golang.org/genai"

pb "google.golang.org/genai/schema"

)

func main() {

ctx := context.Background()

client, err := genai.NewClient(ctx, nil)

if err != nil {

log.Fatal(err)

}

defer client.Close()

model := client.GenerativeModel("gemini-3.1-flash-image")

model.GenerationConfig = &pb.GenerationConfig{

ResponseModalities: []pb.ResponseModality{genai.Text, genai.Image},

ImageConfig: &pb.ImageConfig{

AspectRatio: "1:1",

ImageSize: "1K",

},

}

prompt := "Da Vinci style anatomical sketch of a dissected Monarch butterfly. Detailed drawings of the head, wings, and legs on textured parchment with notes in English."

resp, err := model.GenerateContent(ctx, genai.Text(prompt))

if err != nil {

log.Fatal(err)

}

for _, part := range resp.Candidates[0].Content.Parts {

if txt, ok := part.(genai.Text); ok {

fmt.Printf("%s", string(txt))

} else if img, ok := part.(genai.ImageData); ok {

err := os.WriteFile("butterfly.png", img.Data, 0644)

if err != nil {

log.Fatal(err)

}

}

}

}

Java

import com.google.genai.Client;

import com.google.genai.types.GenerateContentConfig;

import com.google.genai.types.GenerateContentResponse;

import com.google.genai.types.ImageConfig;

import com.google.genai.types.Part;

import java.io.IOException;

import java.nio.file.Files;

import java.nio.file.Paths;

public class HiRes {

public static void main(String[] args) throws IOException {

try (Client client = new Client()) {

GenerateContentConfig config = GenerateContentConfig.builder()

.responseModalities("TEXT", "IMAGE")

.imageConfig(ImageConfig.builder()

.aspectRatio("16:9")

.imageSize("4K")

.build())

.build();

GenerateContentResponse response = client.models.generateContent(

"gemini-3.1-flash-image", """

Da Vinci style anatomical sketch of a dissected Monarch butterfly.

Detailed drawings of the head, wings, and legs on textured

parchment with notes in English.

""",

config);

for (Part part : response.parts()) {

if (part.text().isPresent()) {

System.out.println(part.text().get());

} else if (part.inlineData().isPresent()) {

var blob = part.inlineData().get();

if (blob.data().isPresent()) {

Files.write(Paths.get("butterfly.png"), blob.data().get());

}

}

}

}

}

}

C#

using Google.GenAI;

using Google.GenAI.Types;

using System;

using System.Collections.Generic;

using System.IO;

using System.Threading.Tasks;

public class HiRes {

public static async Task Main(string[] args) {

var client = new Client();

var response = await client.Models.GenerateContentAsync(

model: "gemini-3.1-flash-image",

contents: new List<Part>

{

new Part { Text = "Da Vinci style anatomical sketch of a dissected Monarch butterfly. Detailed drawings of the head, wings, and legs on textured parchment with notes in English." }

},

config: new GenerateContentConfig

{

ResponseModalities = new List<string> { "TEXT", "IMAGE" },

ImageConfig = new ImageConfig

{

AspectRatio = "1:1",

ImageSize = "1K"

}

}

);

foreach (var candidate in response.Candidates) {

foreach (var part in candidate.Content.Parts) {

if (part.Text != null) {

Console.WriteLine(part.Text);

} else if (part.InlineData != null) {

var imageBytes = Convert.FromBase64String(part.InlineData.Data);

await File.WriteAllBytesAsync("butterfly.png", imageBytes);

Console.WriteLine("Image saved as butterfly.png");

}

}

}

}

}

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1/models/gemini-3.1-flash-image:generateContent" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"contents": [{"parts": [{"text": "Da Vinci style anatomical sketch of a dissected Monarch butterfly. Detailed drawings of the head, wings, and legs on textured parchment with notes in English."}]}],

"tools": [{"google_search": {}}],

"generationConfig": {

"responseModalities": ["TEXT", "IMAGE"],

"responseFormat": {

"image": {"aspectRatio": "1:1", "imageSize": "1K"}

}

}

}'

The following is an example image generated from this prompt:

Thinking process

Gemini 3 image models are reasoning models that use a thinking process ("Thinking") for complex prompts. This feature is enabled by default and cannot be disabled in the API. For more information about the thinking process, see the Gemini Thinking guide.

The model generates up to two interim images to test composition and logic. The last image within Thinking is also the final rendered image.

You can inspect the thoughts that led to the final image.

Python

for part in response.parts:
    if part.thought:
        if part.text:
            print(part.text)
        elif image := part.as_image():
            image.show()

JavaScript

for (const part of response.candidates[0].content.parts) {

if (part.thought) {

if (part.text) {

console.log(part.text);

} else if (part.inlineData) {

const imageData = part.inlineData.data;

const buffer = Buffer.from(imageData, 'base64');

fs.writeFileSync('image.png', buffer);

console.log('Image saved as image.png');

}

}

}

Java

for (Part part : response.parts()) {

if (part.thought().orElse(false)) {

if (part.text().isPresent()) {

System.out.println(part.text().get());

} else if (part.inlineData().isPresent()) {

var blob = part.inlineData().get();

if (blob.data().isPresent()) {

Files.write(Paths.get("image.png"), blob.data().get());

System.out.println("Image saved as image.png");

}

}

}

}

C#

foreach (var candidate in response.Candidates) {
    foreach (var part in candidate.Content.Parts) {
        if (part.Thought) {
            if (part.Text != null) {
                Console.WriteLine(part.Text);
            } else if (part.InlineData != null) {
                var imageBytes = Convert.FromBase64String(part.InlineData.Data);
                await File.WriteAllBytesAsync("image.png", imageBytes);
                Console.WriteLine("Image saved as image.png");
            }
        }
    }
}
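The snippets above all filter parts on the `thought` flag. As a minimal sketch of that idea, the hypothetical helper below (`split_thoughts` is not part of any SDK) separates thought parts from the remaining parts so each group can be handled on its own. It assumes only that every part exposes a `thought` attribute that is truthy for thinking output; the `SimpleNamespace` objects and their `image` field are illustrative stand-ins, not real SDK types.

```python
from types import SimpleNamespace


def split_thoughts(parts):
    """Split response parts into (thoughts, answer), preserving order."""
    thoughts, answer = [], []
    for part in parts:
        # `thought` may be None or missing on regular parts; treat that as falsy.
        if getattr(part, "thought", None):
            thoughts.append(part)
        else:
            answer.append(part)
    return thoughts, answer


# Hypothetical stand-ins for SDK parts, used only to exercise the helper.
demo_parts = [
    SimpleNamespace(thought=True, text="Plan the composition.", image=None),
    SimpleNamespace(thought=True, text=None, image="img-a"),
    SimpleNamespace(thought=True, text=None, image="img-b"),
    SimpleNamespace(thought=None, text=None, image="img-c"),
]

thoughts, answer = split_thoughts(demo_parts)
thought_images = [p.image for p in thoughts if p.image is not None]
answer_images = [p.image for p in answer if p.image is not None]
print(len(thoughts), thought_images, answer_images)
```

With a real response, the same helper could be called on `response.parts`, after which the thought group can be logged and the answer group saved or displayed.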

Controlling thinking levels

With Gemini 3.1 Flash Image, you can control how much reasoning the model uses to balance quality against latency. The default thinkingLevel is minimal, and the supported levels are minimal and high. Setting thinkingLevel to minimal gives the lowest-latency responses. Note that minimal thinking does not mean the model does no thinking at all.

You can add the includeThoughts boolean to control whether the thoughts generated by the model are returned in the response or stay hidden.
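As a rough illustration of the two settings, the sketch below builds a request configuration as a plain dictionary and rejects unsupported levels before any request is sent. The helper name `build_generation_config` is hypothetical, and nesting `thinkingConfig` under the generation config is an assumption based on the field names used in this guide. Lowercasing the level is a choice made here to match the values named in the prose.

```python
import json

# Levels named in this guide for Gemini 3.1 Flash Image.
SUPPORTED_LEVELS = ("minimal", "high")


def build_generation_config(thinking_level="minimal", include_thoughts=False):
    """Return a config dict with a validated thinkingConfig (hypothetical helper)."""
    level = thinking_level.lower()
    if level not in SUPPORTED_LEVELS:
        raise ValueError(
            f"Unsupported thinking level {thinking_level!r}; "
            f"expected one of {SUPPORTED_LEVELS}"
        )
    return {
        "responseModalities": ["IMAGE"],
        "thinkingConfig": {
            "thinkingLevel": level,
            "includeThoughts": include_thoughts,
        },
    }


print(json.dumps(build_generation_config("high", include_thoughts=True), indent=2))
```

Calling the helper with no arguments yields the documented default (minimal thinking, thoughts hidden), which is the lowest-latency configuration.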

Python

from google import genai
from google.genai import types

# The client reads the API key from the environment.
client = genai.Client()

response = client.models.generate_content(
    model="gemini-3.1-flash-image",
    contents="A futuristic city built inside a giant glass bottle floating in space",
    config=types.GenerateContentConfig(
        response_modalities=["IMAGE"],
        thinking_config=types.ThinkingConfig(
            thinking_level="High",
            include_thoughts=True
        ),
    )
)

for part in response.parts:
    if part.thought:  # Skip outputting thoughts
        continue
    if part.text:
        print(part.text)
    elif image := part.as_image():
        image.show()

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const response = await ai.models.generateContent({
    model: "gemini-3.1-flash-image",
    contents: "A futuristic city built inside a giant glass bottle floating in space",
    config: {
      responseModalities: ["IMAGE"],
      thinkingConfig: {
        thinkingLevel: "High",
        includeThoughts: true
      },
    },
  });

  for (const part of response.candidates[0].content.parts) {
    if (part.thought) { // Skip outputting thoughts
      continue;
    }
    if (part.text) {
      console.log(part.text);
    } else if (part.inlineData) {
      const imageData = part.inlineData.data;
      const buffer = Buffer.from(imageData, "base64");
      fs.writeFileSync("image.png", buffer);
      console.log("Image saved as image.png");
    }
  }
}

main();

Go

package main

import (
	"context"
	"fmt"
	"log"
	"os"

	"google.golang.org/genai"
)

func main() {
	ctx := context.Background()

	// A nil config makes the client read its settings from the environment.
	client, err := genai.NewClient(ctx, nil)
	if err != nil {
		log.Fatal(err)
	}

	config := &genai.GenerateContentConfig{
		ResponseModalities: []string{"IMAGE"},
		ThinkingConfig: &genai.ThinkingConfig{
			ThinkingLevel:   "High",
			IncludeThoughts: true,
		},
	}

	prompt := "A futuristic city built inside a giant glass bottle floating in space"

	resp, err := client.Models.GenerateContent(ctx, "gemini-3.1-flash-image", genai.Text(prompt), config)
	if err != nil {
		log.Fatal(err)
	}

	for _, part := range resp.Candidates[0].Content.Parts {
		if part.Thought { // Skip outputting thoughts
			continue
		}
		if part.Text != "" {
			fmt.Println(part.Text)
		} else if part.InlineData != nil {
			// InlineData.Data holds the raw image bytes.
			if err := os.WriteFile("image.png", part.InlineData.Data, 0644); err != nil {
				log.Fatal(err)
			}
		}
	}
}

Java

import com.google.genai.Client;
import com.google.genai.types.GenerateContentConfig;
import com.google.genai.types.GenerateContentResponse;
import com.google.genai.types.Part;
import com.google.genai.types.ThinkingConfig;

import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Paths;

public class ThinkingLevels {
  public static void main(String[] args) throws IOException {

    try (Client client = new Client()) {
      GenerateContentConfig config = GenerateContentConfig.builder()
          .responseModalities("IMAGE")
          .thinkingConfig(ThinkingConfig.builder()
              .thinkingLevel("High")
              .includeThoughts(true)
              .build())
          .build();

      GenerateContentResponse response = client.models.generateContent(
          "gemini-3.1-flash-image",
          "A futuristic city built inside a giant glass bottle floating in space",
          config);

      for (Part part : response.parts()) {
        if (part.thought().orElse(false)) {
          // Skip outputting thoughts
          continue;
        }
        if (part.text().isPresent()) {
          System.out.println(part.text().get());
        } else if (part.inlineData().isPresent()) {
          var blob = part.inlineData().get();
          if (blob.data().isPresent()) {
            Files.write(Paths.get("image.png"), blob.data().get());
            System.out.println("Image saved as image.png");
          }
        }
      }
    }
  }
}

C#

using Google.GenAI;
using System;
using System.Collections.Generic;
using System.IO;
using System.Threading.Tasks;

public class ThinkingLevels {
    public static async Task Main(string[] args) {
        var client = new Client();

        var response = await client.Models.GenerateContentAsync(
            model: "gemini-3.1-flash-image",
            contents: new List<Part>
            {
                new Part { Text = "A futuristic city built inside a giant glass bottle floating in space" }
            },
            config: new GenerateContentConfig
            {
                ResponseModalities = new List<string> { "IMAGE" },
                ThinkingConfig = new ThinkingConfig
                {
                    ThinkingLevel = "High",
                    IncludeThoughts = true
                }
            }
        );

        foreach (var candidate in response.Candidates) {
            foreach (var part in candidate.Content.Parts) {
                if (part.Thought) {
                    // Skip outputting thoughts
                    continue;
                }
                if (part.Text != null) {
                    Console.WriteLine(part.Text);
                } else if (part.InlineData != null) {
                    var imageBytes = Convert.FromBase64String(part.InlineData.Data);
                    await File.WriteAllBytesAsync("image.png", imageBytes);
                    Console.WriteLine("Image saved as image.png");
                }
            }
        }
    }
}

REST

curl -s -X POST \