|

|

Run in Google Colab Run in Google Colab

|

|

|

View source on GitHub View source on GitHub

|

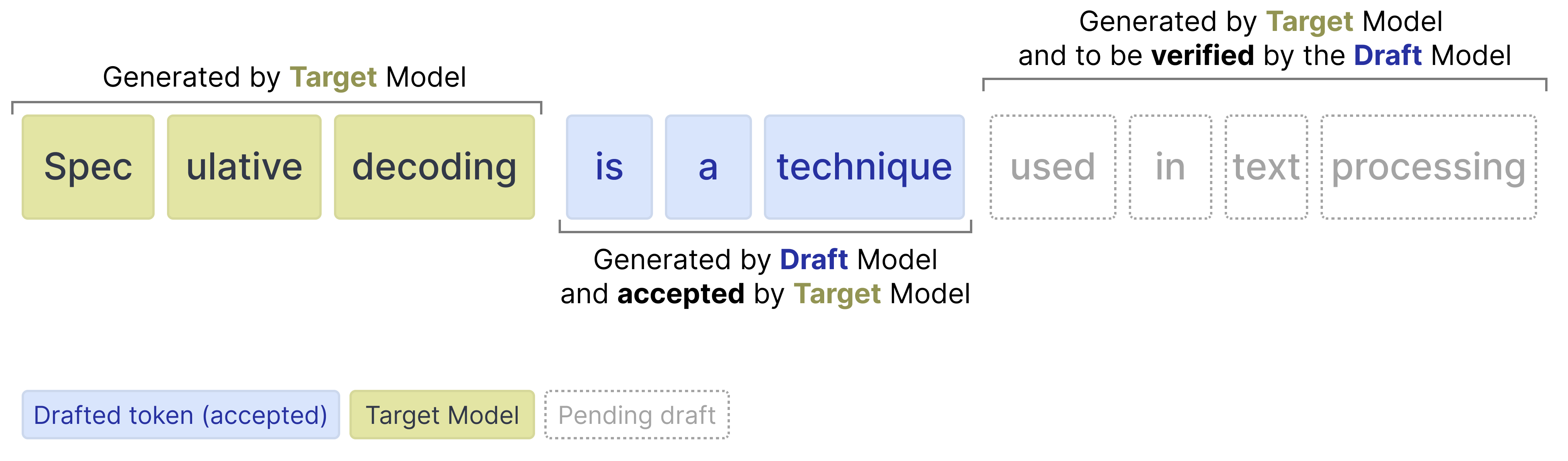

To improve the inference speed of the Gemma 4 models, a new series of autoregressive “drafter” models has been released alongside the main lineup. Instead of solely relying on the primary Gemma 4 models (referred to as the “target” models), the draft model predicts several tokens autoregressively in the time it takes the target model to process just one. This technique is also known as speculative decoding.

After the drafter has predicted multiple draft tokens, the target model now only has to verify those suggested draft tokens. The verification is done in parallel thereby drastically speeding up inference. It reduces the number of forward passes the target model has to do for each token. Because our drafter generates a sequence of tokens for verification, we refer to it as the Multi-Token Prediction (MTP) head.

The draft models released for the Gemma 4 family are small and introduce several enhancements to improve the quality of drafted tokens and to further speed up inference, like using the target model activations and KV-cache to get better predictions.

These enhancements result in significant decoding speedups while guaranteeing similar quality, making these checkpoints perfect for low-latency and on-device applications.

Install Python packages

Install the Hugging Face libraries required for running the Gemma 4 and Gemma 4 assistant model.

# Install PyTorch & other librariespip install torch accelerate# Install the transformers librarypip install transformers

Load the Models

For each target model (one of the main models in the Gemma 4 model), there is an assistant to helps speed up inference. As such, you will load two models:

- Target (e.g.,

google/gemma-4-E2B-it): The full Gemma 4 target model - Drafter (e.g.,

google/gemma-4-E2B-it-assistant): The lightweight 4-layer MTP drafter that proposes candidate tokens

Note that the drafter is often referred to as the assistant since the model helps the larger model in choosing which tokens to predict.

Use the transformers libraries to create an instance of a processor and model using the AutoProcessor and AutoModelForCausalLM classes as shown in the following code example:

TARGET_MODEL_ID = "google/gemma-4-E2B-it" # @param ["google/gemma-4-E2B-it","google/gemma-4-E4B-it", "google/gemma-4-31B-it", "google/gemma-4-26B-A4B-it"]

ASSISTANT_MODEL_ID = TARGET_MODEL_ID + "-assistant"

import torch

from transformers import AutoProcessor, AutoModelForCausalLM

# Target Model

processor = AutoProcessor.from_pretrained(TARGET_MODEL_ID)

target_model = AutoModelForCausalLM.from_pretrained(

TARGET_MODEL_ID,

torch_dtype=torch.bfloat16,

device_map="auto",

)

# Assistant Model (the drafter)

assistant_model = AutoModelForCausalLM.from_pretrained(

ASSISTANT_MODEL_ID,

torch_dtype=torch.bfloat16,

device_map="auto",

)

[transformers] `torch_dtype` is deprecated! Use `dtype` instead! Loading weights: 0%| | 0/1951 [00:00<?, ?it/s] Loading weights: 0%| | 0/50 [00:00<?, ?it/s]

Gemma 4 with the Assistant

Using an assistant in transformers is fortunately quite straightforward and requires you to pass the assistant model to the model.generate function:

# Process inputs with the `target_model`

messages = [

{

"role": "user",

"content": "Explain the concepts of speculative decoding and MTP in 3 sentences."

}

]

input_text = processor.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

inputs = processor(text=input_text, return_tensors="pt").to(target_model.device)

# `assistant_model=assistant_model` is all you need to enable MTP!

outputs = target_model.generate(

**inputs,

assistant_model=assistant_model,

max_new_tokens=256,

do_sample=False,

)

# Decode the response into text

response = processor.decode(outputs[0][inputs["input_ids"].shape[1]:], skip_special_tokens=True)

print(response)

**Speculative decoding** is a technique where a smaller, faster language model (the "draft model") generates several candidate tokens, which are then quickly verified by a larger, more accurate model to produce a final, high-quality output much faster than decoding the large model alone. **MTP (Multi-Task Prediction)** involves training a single model to perform multiple related tasks simultaneously, allowing it to leverage shared knowledge across different objectives. Together, these methods aim to significantly accelerate the inference speed of large language models while maintaining or improving output quality.

Under the hood, the process is as follows:

- The drafter proposes N tokens generated autoregressively

- The target model verifies all N tokens in one forward pass

- Drafted tokens with high probabilities are accepted

- Drafted tokens with low probabilities are rejected

- Since the target model does a forward pass, it will always generated 1 token by itself regardless of how many drafted tokens were accepted or rejected

Draft Tokens

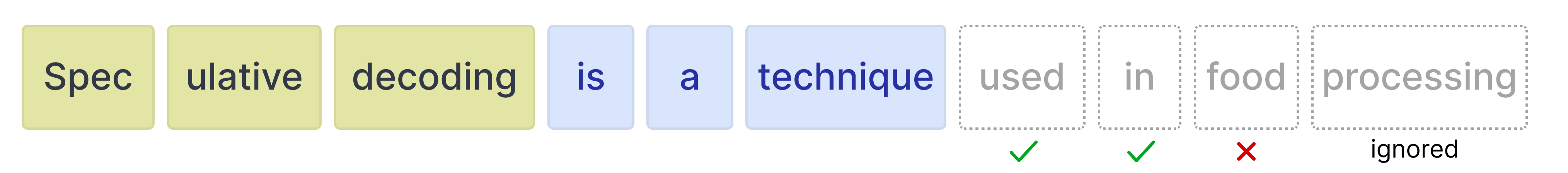

The drafter can generate any amount of tokens for the target model to verify. However, the target model can still choose to reject certain tokens. When it does, all tokens after that are ignored.

As such, it is important to know the tradeoff when using various values for the number of drafted tokens.

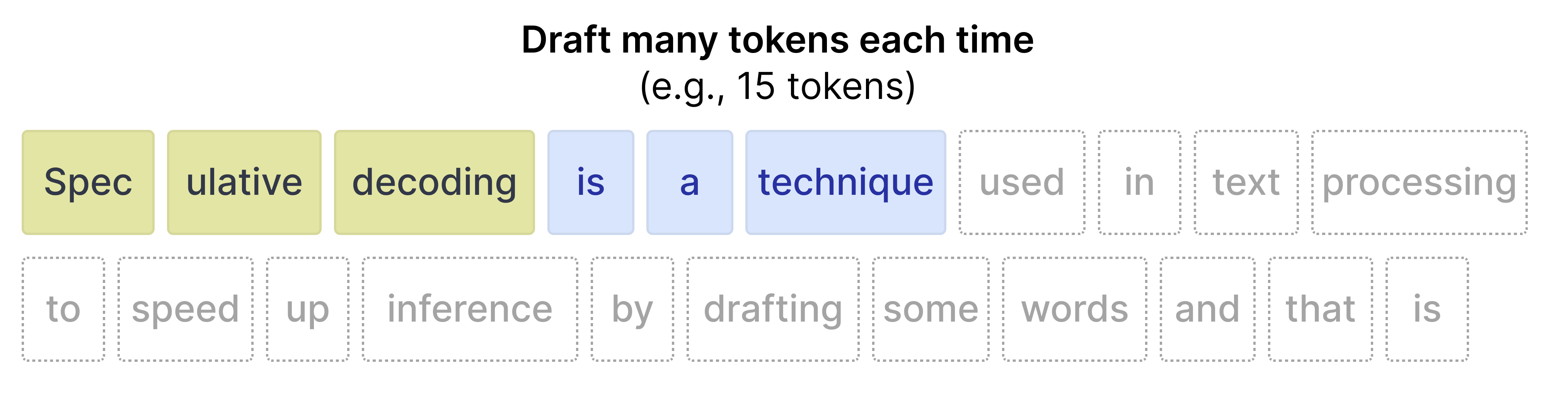

More draft tokens

When you draft many tokens (for instance 15), then there is a high chance that not all tokens will be accepted. As such, there is a higher potential for wasted compute. In contrast, it does have a tendency to speed up inference when the acceptance rate is high.

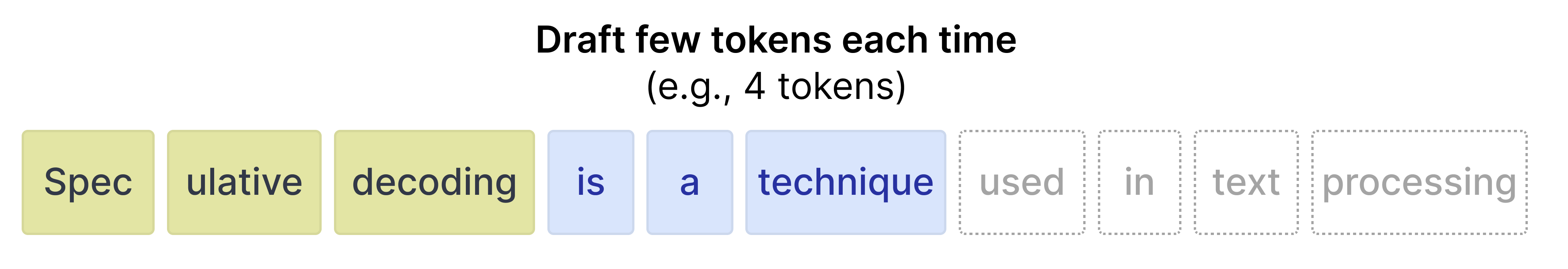

Fewer draft tokens

When you draft fewer tokens, the acceptance rate tends to be higher since tokens that are closer in position to the initial prompt are more accurate. However, since only a few tokens are drafted, the speed up that you would get from a faster drafter model is reduced.

Fortunately, you do not have to experiment with the best values for your use case in transformers since you can set the num_assistant_tokens_schedule to "heuristic" which will automatically adapt the number of drafted tokens at runtime:

- All tokens accepted -- Increase the number of tokens to draft by 2 since the drafter is quite accurate for the prompt. Increasing the number of tokens drafted might result in a speed up if those tokens are also accepted.

- Any tokens rejected -- If any tokens are rejected, reduce the amount of tokens to draft by 1. Reducing the number of tokens makes it so that not too many drafted are wasted if the target model continues to reject most of the tokens.

Likewise, you can update number of draft tokens by updating num_assistant_tokens in the drafter like so:

# Update how many draft tokens are generated at the start of inference

assistant_model.generation_config.num_assistant_tokens = 4

# Update how the number of draft tokens are updated ("heuristic" for a dynamic schedule and "constant" for a constant schedule)

assistant_model.generation_config.num_assistant_tokens_schedule = "heuristic"

# Run with MTP

outputs = target_model.generate(

**inputs,

assistant_model=assistant_model,

max_new_tokens=256,

do_sample=False,

)

# Decode the response into text

response = processor.decode(outputs[0][inputs["input_ids"].shape[1]:], skip_special_tokens=True)

print(response)

**Speculative decoding** is a technique where a smaller, faster language model (the "draft model") generates several candidate tokens, which are then verified by a larger, more accurate model to quickly produce a high-quality output. **MTP (Multi-Task Prediction)** involves training a single model to perform multiple related tasks simultaneously, allowing it to leverage shared knowledge across different objectives. Together, these methods aim to significantly speed up the inference process of large language models while maintaining or improving output quality.