Nano Banana image generation


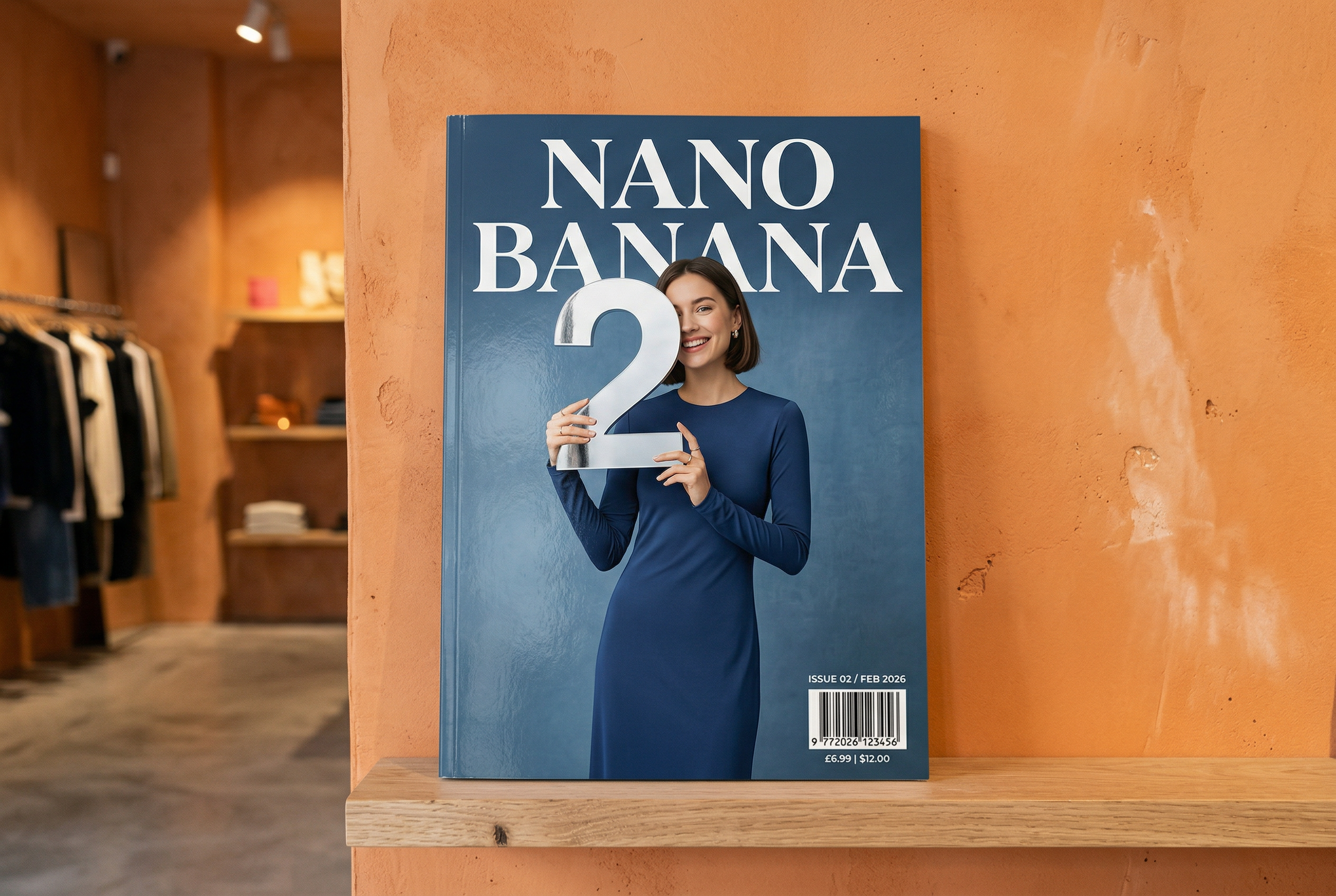

Generated by Nano Banana 2. Prompt: "A photo of a glossy magazine cover, the minimal blue cover has the large bold words Nano Banana. The text is in a serif font and fills the view. No other text. In front of the text there is a portrait of a person in a sleek and minimal dress. She is playfully holding the number 2, which is the focal point.

Put the issue number and "Feb 2026" date in the corner along with a barcode. The magazine is on a shelf against an orange plastered wall, within a designer store."

Generated by Nano Banana Pro. Prompt: "Present a clear, 45° top-down isometric miniature 3D cartoon scene of London, featuring its most iconic landmarks and architectural elements. Use soft, refined textures with realistic PBR materials and gentle, lifelike lighting and shadows. Integrate the current weather conditions directly into the city environment to create an immersive atmospheric mood. Use a clean, minimalistic composition with a soft, solid-colored background. At the top-center, place the title "London" in large bold text, a prominent weather icon beneath it, then the date (small text) and temperature (medium text). All text must be centered with consistent spacing, and may subtly overlap the tops of the buildings."

Generated by Nano Banana 2. Prompt: "Use image search to find accurate images of a resplendent quetzal bird. Create a beautiful 3:2 wallpaper of this bird, with a natural top to bottom gradient and minimal composition."

Generated by Nano Banana Pro. Prompt: "Put this logo on a high-end ad for a banana scented perfume. The logo is perfectly integrated into the bottle."

Generated by Nano Banana Pro. Prompt: "A photo of an everyday scene at a busy cafe serving breakfast. In the foreground is an anime man with blue hair, one of the people is a pencil sketch, another is a claymation person"

Generated by Nano Banana Pro. Prompt: "Use search to find how the Gemini 3 Flash launch has been received. Use this information to write a short article about it (with headings). Return a photo of the article as it appeared in a design focused glossy magazine. It is a photo of a single folded over page, showing the article about Gemini 3 Flash. One hero photo. Headline in serif."

Generated by Nano Banana Pro. Prompt: "An icon representing a cute dog. The background is white. Make the icons in a colorful and tactile 3D style. No text."

Generated by Nano Banana 2. Prompt: "Make a photo that is perfectly isometric. It is not a miniature, it is a captured photo that just happened to be perfectly isometric. It is a photo of a beautiful modern garden. There's a large 2 shaped pool and the words: Nano Banana 2."

Nano Banana is the name for Gemini's native image generation capabilities. Gemini can generate and process images conversationally with text, images, or a combination of both. This lets you create, edit, and iterate on visuals with unprecedented control.

Nano Banana refers to three distinct models available in the Gemini API:

- Nano Banana 2: The Gemini 3.1 Flash Image Preview model (gemini-3.1-flash-image-preview). This model is the high-efficiency counterpart to Gemini 3 Pro Image, optimized for speed and high-volume developer use cases.
- Nano Banana Pro: The Gemini 3 Pro Image Preview model (gemini-3-pro-image-preview). This model is designed for professional asset production. It uses advanced reasoning ("Thinking") to follow complex instructions and render high-fidelity text.
- Nano Banana: The Gemini 2.5 Flash Image model (gemini-2.5-flash-image). This model is designed for speed and efficiency, optimized for high-volume, low-latency tasks.
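Code that switches between these models needs the three model IDs in one place. The sketch below is a small lookup table holding the IDs listed above. The dictionary and the model_id helper are illustrative names; they are not part of the SDK.

```python
# Model IDs as listed on this page; the nickname keys are for illustration only.
NANO_BANANA_MODELS = {
    "Nano Banana 2": "gemini-3.1-flash-image-preview",
    "Nano Banana Pro": "gemini-3-pro-image-preview",
    "Nano Banana": "gemini-2.5-flash-image",
}


def model_id(nickname: str) -> str:
    """Return the Gemini API model ID for a Nano Banana nickname."""
    try:
        return NANO_BANANA_MODELS[nickname]
    except KeyError:
        known = ", ".join(sorted(NANO_BANANA_MODELS))
        raise ValueError(f"Unknown model {nickname!r}; expected one of: {known}") from None
```

The returned string is what you pass as model= in the examples that follow.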

All generated images include a SynthID watermark.

Image generation (text-to-image)

Python

```python
from google import genai
import base64

client = genai.Client()

prompt = "Create a picture of a nano banana dish in a fancy restaurant with a Gemini theme"

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[prompt],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                # Image data arrives base64-encoded; decode before writing.
                with open("generated_image.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))
```

JavaScript

```javascript
import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const prompt =
    "Create a picture of a nano banana dish in a fancy restaurant with a Gemini theme";

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: prompt,
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("gemini-native-image.png", buffer);
          console.log("Image saved as gemini-native-image.png");
        }
      }
    }
  }
}

main();
```

REST

```bash
curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [
      {"type": "text", "text": "Create a picture of a nano banana dish in a fancy restaurant with a Gemini theme"}
    ]
  }'
```
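The loops above write every image to the same file name. If a response contains more than one image, only the last one is kept. The sketch below numbers the files instead. It assumes the response shape used throughout this page: interaction.steps, each step with .type and .content, and image blocks carrying base64 .data. save_output_images is a hypothetical helper, not an SDK function.

```python
import base64
from pathlib import Path


def save_output_images(interaction, out_dir=".", prefix="generated_image"):
    """Print text blocks and save each image block from model_output steps.

    Files are named <prefix>_0.png, <prefix>_1.png, ... and their paths are returned.
    """
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for step in interaction.steps:
        if step.type != "model_output":
            continue  # skip thought and tool-call steps
        for block in step.content:
            if block.type == "text":
                print(block.text)
            elif block.type == "image":
                # The .png suffix assumes a PNG response (mime_type "image/png").
                path = out / f"{prefix}_{len(paths)}.png"
                path.write_bytes(base64.b64decode(block.data))
                paths.append(path)
    return paths
```

After any of the create calls on this page, save_output_images(interaction, prefix="nano_banana") writes the images and returns their paths.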

Image editing (text-and-image-to-image)

Reminder: Make sure you have the necessary rights to any images you upload. Don't generate content that infringes on others' rights, including videos or images that deceive, harass, or harm. Your use of this generative AI service is subject to our Prohibited Use Policy.

Provide an image and use text prompts to add, remove, or modify elements, change the style, or adjust the color grading.

The following example demonstrates uploading base64-encoded images. For multiple images, larger payloads, and supported MIME types, see the Image understanding page.
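The REST examples on this page send local images as inline content objects of the form {"type": "image", "mime_type": ..., "data": <base64>}. The sketch below builds such an object from a file path and guesses the MIME type from the extension. image_block is a hypothetical helper. Passing these dictionaries to the Python SDK's input list is an assumption; the Python example in this section passes a PIL image instead.

```python
import base64
import mimetypes
from pathlib import Path


def image_block(path):
    """Build an inline image content object from a local file."""
    mime_type, _ = mimetypes.guess_type(str(path))
    if mime_type is None or not mime_type.startswith("image/"):
        raise ValueError(f"Cannot infer an image MIME type for {path}")
    # Base64-encode the raw bytes and return them as ASCII text for the JSON body.
    data = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return {"type": "image", "mime_type": mime_type, "data": data}
```

The mime_type comes from the file extension, so a .png file is labelled image/png rather than a hard-coded value.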

Python

```python
from google import genai
from PIL import Image
import base64

client = genai.Client()

# Adjacent string literals are joined into a single prompt string.
prompt = (
    "Create a picture of my cat eating a nano-banana in a "
    "fancy restaurant under the Gemini constellation"
)

image = Image.open("/path/to/cat_image.png")

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[prompt, image],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("generated_image.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))
```

JavaScript

```javascript
import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  // Read the source image and encode it as base64 for inline upload.
  const imagePath = "path/to/cat_image.png";
  const imageData = fs.readFileSync(imagePath);
  const base64Image = imageData.toString("base64");

  const prompt = [
    {
      type: "text",
      text:
        "Create a picture of my cat eating a nano-banana in a " +
        "fancy restaurant under the Gemini constellation",
    },
    {
      type: "image",
      mimeType: "image/png",
      data: base64Image,
    },
  ];

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: prompt,
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("gemini-native-image.png", buffer);
          console.log("Image saved as gemini-native-image.png");
        }
      }
    }
  }
}

main();
```

REST

```bash
curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -d "{
    \"model\": \"gemini-3.1-flash-image-preview\",
    \"input\": [
      {\"type\": \"text\", \"text\": \"Create a picture of my cat eating a nano-banana in a fancy restaurant under the Gemini constellation\"},
      {
        \"type\": \"image\",
        \"mime_type\": \"image/jpeg\",
        \"data\": \"<BASE64_IMAGE_DATA>\"
      }
    ]
  }"
```

Multi-turn image editing

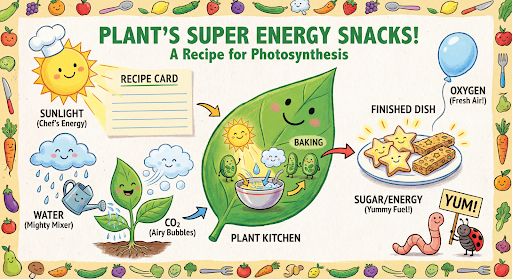

Keep generating and editing images conversationally. Multi-turn conversation is the recommended way to iterate on images. The following example shows a prompt to generate an infographic about photosynthesis.

Python

```python
from google import genai
import base64

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="Create a vibrant infographic that explains photosynthesis as if it were a recipe for a plant's favorite food. Show the \"ingredients\" (sunlight, water, CO2) and the \"finished dish\" (sugar/energy). The style should be like a page from a colorful kids' cookbook, suitable for a 4th grader.",
    tools=[{"google_search": {}}],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("photosynthesis.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))
```

JavaScript

```javascript
import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

const ai = new GoogleGenAI({});

async function main() {
  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "Create a vibrant infographic that explains photosynthesis as if it were a recipe for a plant's favorite food. Show the \"ingredients\" (sunlight, water, CO2) and the \"finished dish\" (sugar/energy). The style should be like a page from a colorful kids' cookbook, suitable for a 4th grader.",
    tools: [{ googleSearch: {} }],
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("photosynthesis.png", buffer);
          console.log("Image saved as photosynthesis.png");
        }
      }
    }
  }
}

await main();
```

REST

```bash
curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [
      {"type": "text", "text": "Create a vibrant infographic that explains photosynthesis as if it were a recipe for a plants favorite food. Show the \"ingredients\" (sunlight, water, CO2) and the \"finished dish\" (sugar/energy). The style should be like a page from a colorful kids cookbook, suitable for a 4th grader."}
    ],
    "tools": [{"google_search": {}}]
  }'
```

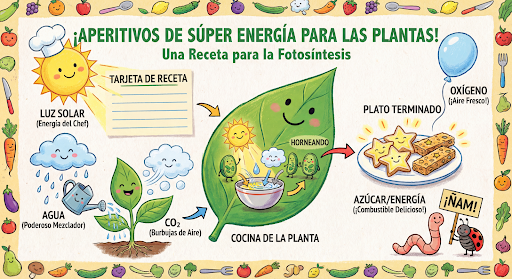

You can then pass the first interaction's ID as previous_interaction_id to change the language on the graphic to Spanish.

Python

```python
interaction_2 = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="Update this infographic to be in Spanish. Do not change any other elements of the image.",
    previous_interaction_id=interaction.id,
    response_format={
        "type": "image",
        "mime_type": "image/png",
        "aspect_ratio": "16:9",
        "image_size": "2K",
    },
)

for step in interaction_2.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("photosynthesis_spanish.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))
```

JavaScript

```javascript
const interaction2 = await ai.interactions.create({
  model: "gemini-3.1-flash-image-preview",
  input: "Update this infographic to be in Spanish. Do not change any other elements of the image.",
  previousInteractionId: interaction.id,
  response_format: {
    type: "image",
    mime_type: "image/png",
    aspect_ratio: "16:9",
    image_size: "2K",
  },
});

// Text and images live inside the content of model_output steps.
for (const step of interaction2.steps) {
  if (step.type === "model_output") {
    for (const contentBlock of step.content) {
      if (contentBlock.type === "text") {
        console.log(contentBlock.text);
      } else if (contentBlock.type === "image") {
        const buffer = Buffer.from(contentBlock.data, "base64");
        fs.writeFileSync("photosynthesis_spanish.png", buffer);
      }
    }
  }
}
```

REST

```bash
curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [
      {"type": "text", "text": "Update this infographic to be in Spanish. Do not change any other elements of the image."}
    ],
    "previous_interaction_id": "<PREVIOUS_INTERACTION_ID>",
    "response_format": {
      "type": "image",
      "mime_type": "image/png",
      "aspect_ratio": "16:9",
      "image_size": "2K"
    }
  }'
```
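The two requests above follow one pattern: each new turn passes the previous interaction's id as previous_interaction_id. The sketch below wraps that chaining in a loop over a list of instructions. run_edit_chain is a hypothetical helper, not part of the SDK. It uses only the model, input, and previous_interaction_id arguments shown in the examples above, and it takes the client as a parameter so it can be exercised with a stub.

```python
def run_edit_chain(client, model, prompts):
    """Send prompts as successive turns, linking each to the previous one.

    The first prompt starts a new interaction. Each later prompt passes the
    prior interaction's id as previous_interaction_id. Returns the
    interactions in order.
    """
    interactions = []
    previous_id = None
    for prompt in prompts:
        kwargs = {"model": model, "input": prompt}
        if previous_id is not None:
            kwargs["previous_interaction_id"] = previous_id
        interaction = client.interactions.create(**kwargs)
        interactions.append(interaction)
        previous_id = interaction.id
    return interactions
```

For example, passing genai.Client(), "gemini-3.1-flash-image-preview", and the infographic prompt followed by the Spanish-translation prompt reproduces both turns above. You can then save images from each returned interaction.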

New with Gemini 3 Image models

Gemini 3 offers state-of-the-art image generation and editing models. Gemini 3.1 Flash Image is optimized for speed and high-volume use cases, and Gemini 3 Pro Image is optimized for professional asset production. Both use advanced reasoning to handle challenging workflows and excel at complex, multi-turn creation and modification tasks.

- High-resolution output: Built-in generation capabilities for 1K, 2K, and 4K visuals.
  - Gemini 3.1 Flash Image adds the smaller 512px (0.5K) resolution.
- Advanced text rendering: Capable of generating legible, stylized text for infographics, menus, diagrams, and marketing assets.
- Grounding with Google Search: The model can use Google Search as a tool to verify facts and generate imagery based on real-time data (e.g., current weather maps, stock charts, recent events).
  - Gemini 3.1 Flash Image adds Grounding with Google Image Search alongside Web Search.
- Thinking mode: The model uses a "thinking" process to reason through complex prompts. It generates interim "thought images" (visible in the backend but not charged) to refine the composition before producing the final high-quality output.
- Up to 14 reference images: You can now mix up to 14 reference images to produce the final image.
- New aspect ratios: Gemini 3.1 Flash Image Preview adds 1:4, 4:1, 1:8, and 8:1 aspect ratios.

Use up to 14 reference images

Gemini 3 image models let you mix up to 14 reference images. These images can include the following:

| Gemini 3.1 Flash Image Preview | Gemini 3 Pro Image Preview |
|---|---|
| Up to 10 high-fidelity object images to include in the final image | Up to 6 high-fidelity object images to include in the final image |
| Up to 4 character images to maintain character consistency | Up to 5 character images to maintain character consistency |
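Before sending a request, you can check a planned set of reference images against the limits in the table. This is a client-side sketch. The API input does not label an image as an "object" or a "character"; that split is your own plan. REFERENCE_LIMITS and check_reference_counts are hypothetical names, and the numbers are copied from the table above.

```python
# Per-model limits copied from the table above.
REFERENCE_LIMITS = {
    "gemini-3.1-flash-image-preview": {"objects": 10, "characters": 4},
    "gemini-3-pro-image-preview": {"objects": 6, "characters": 5},
}
MAX_REFERENCE_IMAGES = 14  # overall cap stated for Gemini 3 image models


def check_reference_counts(model, objects, characters):
    """Raise ValueError if planned reference images exceed the table's limits."""
    limits = REFERENCE_LIMITS.get(model)
    if limits is None:
        raise ValueError(f"No reference-image limits recorded for {model!r}")
    for kind, count in (("objects", objects), ("characters", characters)):
        if count < 0:
            raise ValueError(f"{kind} count cannot be negative")
        if count > limits[kind]:
            raise ValueError(f"{model} accepts at most {limits[kind]} {kind} images, got {count}")
    if objects + characters > MAX_REFERENCE_IMAGES:
        raise ValueError(f"At most {MAX_REFERENCE_IMAGES} reference images in total")
```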

Python

```python
from google import genai
from PIL import Image
import base64

prompt = "An office group photo of these people, they are making funny faces."

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[
        prompt,
        Image.open("person1.png"),
        Image.open("person2.png"),
        Image.open("person3.png"),
        Image.open("person4.png"),
        Image.open("person5.png"),
    ],
    response_format={
        "image": {
            "aspect_ratio": "5:4",
            "image_size": "2K",
        }
    },
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("office.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))
```

JavaScript

```javascript
import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

// Read a local file and return its contents as a base64 string.
const toBase64 = (path) => fs.readFileSync(path).toString("base64");

async function main() {
  const ai = new GoogleGenAI({});

  const input = [
    { type: "text", text: "An office group photo of these people, they are making funny faces." },
    { type: "image", mimeType: "image/png", data: toBase64("person1.png") },
    { type: "image", mimeType: "image/png", data: toBase64("person2.png") },
    { type: "image", mimeType: "image/png", data: toBase64("person3.png") },
    { type: "image", mimeType: "image/png", data: toBase64("person4.png") },
    { type: "image", mimeType: "image/png", data: toBase64("person5.png") },
  ];

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: input,
    responseFormat: { image: { aspectRatio: "5:4", imageSize: "2K" } },
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("office.png", buffer);
        }
      }
    }
  }
}

main();
```

REST

```bash
curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -d "{
    \"model\": \"gemini-3.1-flash-image-preview\",
    \"input\": [
      {\"type\": \"text\", \"text\": \"An office group photo of these people, they are making funny faces.\"},
      {\"type\": \"image\", \"mime_type\": \"image/png\", \"data\": \"<BASE64_DATA_IMG_1>\"},
      {\"type\": \"image\", \"mime_type\": \"image/png\", \"data\": \"<BASE64_DATA_IMG_2>\"},
      {\"type\": \"image\", \"mime_type\": \"image/png\", \"data\": \"<BASE64_DATA_IMG_3>\"},
      {\"type\": \"image\", \"mime_type\": \"image/png\", \"data\": \"<BASE64_DATA_IMG_4>\"},
      {\"type\": \"image\", \"mime_type\": \"image/png\", \"data\": \"<BASE64_DATA_IMG_5>\"}
    ],
    \"response_format\": {
      \"image\": {
        \"aspect_ratio\": \"5:4\",
        \"image_size\": \"2K\"
      }
    }
  }"
```

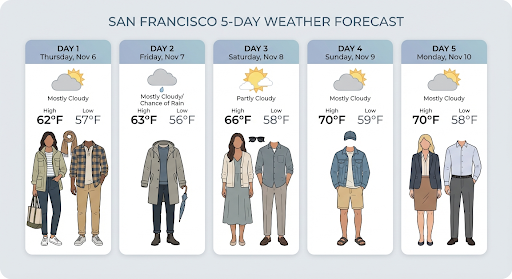

Grounding with Google Search

Use the Google Search tool to generate images based on real-time information, such as weather forecasts, stock charts, or recent events.

When you use Grounding with Google Search for image generation, image-based search results are not passed to the generation model and are excluded from the response (see Grounding with Google Search for Images).

Python

```python
from google import genai
import base64

prompt = "Visualize the current weather forecast for the next 5 days in San Francisco as a clean, modern weather chart. Add a visual on what I should wear each day"

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=prompt,
    tools=[{"google_search": {}}],
    response_format={
        "type": "image",
        "mime_type": "image/png",
        "aspect_ratio": "16:9",
    },
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("weather.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))
```

JavaScript

```javascript
import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "Visualize the current weather forecast for the next 5 days in San Francisco as a clean, modern weather chart. Add a visual on what I should wear each day",
    tools: [{ googleSearch: {} }],
    response_format: {
      type: "image",
      mime_type: "image/png",
      aspect_ratio: "16:9",
      image_size: "2K",
    },
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("weather.png", buffer);
        }
      }
    }
  }
}

main();
```

REST

```bash
curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [
      {"type": "text", "text": "Visualize the current weather forecast for the next 5 days in San Francisco as a clean, modern weather chart. Add a visual on what I should wear each day"}
    ],
    "tools": [{"google_search": {}}],
    "response_format": {
      "type": "image",
      "mime_type": "image/png",
      "aspect_ratio": "16:9"
    }
  }'
```

The response includes google_search_call and google_search_result steps, along with inline url_citation annotations on the text step:

- google_search_result: Contains search_suggestions, an HTML snippet for rendering search suggestions in your UI.
- url_citation annotations: Inline citations on the text step that link parts of the response to their web sources.

Grounding with Google Search for Images (3.1 Flash)

Grounding with Google Image Search allows models to use web images retrieved via Google Image Search as visual context for image generation. Image Search is a new search type within the existing Grounding with Google Search tool, functioning alongside standard Web Search.

To enable Image Search, configure the google_search tool in your API request and specify image_search within the search_types array. Image Search can be used independently or together with Web Search.
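Because Image Search and Web Search can be enabled independently or together, you may want to build the tool entry from two flags. The sketch below follows the shape of the Python example in this section, which nests "search_types" under the "google_search" key. The REST example uses a flatter {"type": "google_search", ...} form, so check the API reference for the shape your client expects. google_search_tool is a hypothetical helper.

```python
def google_search_tool(web=True, image=False):
    """Build a google_search tool entry with the requested search types."""
    search_types = []
    if web:
        search_types.append("web_search")
    if image:
        search_types.append("image_search")  # documented for Gemini 3.1 Flash Image
    if not search_types:
        raise ValueError("Enable at least one of web or image search")
    return {"google_search": {"search_types": search_types}}
```

Pass the result inside the tools list, for example tools=[google_search_tool(image=True)].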

Python

```python
from google import genai

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="A detailed painting of a Timareta butterfly resting on a flower",
    tools=[{
        "google_search": {
            "search_types": ["web_search", "image_search"]
        }
    }],
)
```

JavaScript

```javascript
import { GoogleGenAI } from "@google/genai";

async function main() {
  const ai = new GoogleGenAI({});

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "A detailed painting of a Timareta butterfly resting on a flower",
    tools: [{
      googleSearch: {
        searchTypes: ["web_search", "image_search"],
      },
    }],
  });
}

main();
```

REST

```bash
curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": "A detailed painting of a Timareta butterfly resting on a flower",
    "tools": [{"type": "google_search", "search_types": ["web_search", "image_search"]}]
  }'
```

Display requirements

When you use Image Search within Grounding with Google Search, you must display the search_suggestions from the google_search_result step. Full usage requirements are detailed in the Terms of Service.

Response

For grounded responses using image search, the API returns inline citations and attribution metadata as part of the response steps:

- url_citation annotations: Inline citations on the text content block within model_output that link the generated content to its source.
- google_search_result: Contains search_suggestions, an HTML snippet for rendering search suggestions in your UI.

Generate images up to 4K resolution

Gemini 3 image models generate 1K images by default but can also output 2K and 4K images. Gemini 3.1 Flash Image can additionally output 512px (0.5K) images. To generate higher-resolution assets, specify the image_size in the response_format.

You must use an uppercase "K" in size values such as 1K, 2K, and 4K. Lowercase values (e.g., 1k) are rejected.
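Because lowercase sizes are rejected by the API, a local check can catch the mistake before a request is sent. The sketch below accepts only the sizes documented for all Gemini 3 image models. The 512px (0.5K) option for Gemini 3.1 Flash Image is deliberately left out, since its exact parameter string is not spelled out here. check_image_size is a hypothetical helper.

```python
# Sizes documented for all Gemini 3 image models (uppercase K required).
SUPPORTED_IMAGE_SIZES = {"1K", "2K", "4K"}


def check_image_size(size):
    """Return size unchanged if it is supported, else raise ValueError."""
    if size in SUPPORTED_IMAGE_SIZES:
        return size
    if size.upper() in SUPPORTED_IMAGE_SIZES:
        # e.g. "2k": right size, wrong case, which the API rejects.
        raise ValueError(f"image_size must use an uppercase K: use {size.upper()!r}, not {size!r}")
    raise ValueError(f"Unsupported image_size {size!r}; expected one of {sorted(SUPPORTED_IMAGE_SIZES)}")
```

Use it when building the response_format, for example "image_size": check_image_size("4K").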

Python

from google import genai

from google.genai import types

import base64

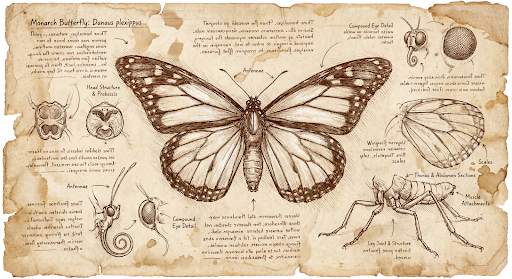

prompt = "Da Vinci style anatomical sketch of a dissected Monarch butterfly. Detailed drawings of the head, wings, and legs on textured parchment with notes in English."

client = genai.Client()

interaction = client.interactions.create(

model="gemini-3.1-flash-image-preview",

input=prompt,

response_format=[

{

"type": "image",

"mime_type": "image/png",

"aspect_ratio": "1:1",

"image_size": "1K"

}

],

)

for step in interaction.steps:

if step.type == "model_output":

for content_block in step.content:

if content_block.type == "text":

print(content_block.text)

elif content_block.type == "image":

with open("butterfly.png", "wb") as f:

f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";

import * as fs from "node:fs";

async function main() {

const ai = new GoogleGenAI({});

const interaction = await ai.interactions.create({

model: "gemini-3.1-flash-image-preview",

input: "Da Vinci style anatomical sketch of a dissected Monarch butterfly. Detailed drawings of the head, wings, and legs on textured parchment with notes in English.",

response_format: [

{

type: "image",

mime_type: "image/png",

aspect_ratio: "1:1",

image_size: "1K",

}

],

});

for (const step of interaction.steps) {

if (step.type === "model_output") {

for (const contentBlock of step.content) {

if (contentBlock.type === "text") {

console.log(contentBlock.text);

} else if (contentBlock.type === "image") {

const buffer = Buffer.from(contentBlock.data, "base64");

fs.writeFileSync("butterfly.png", buffer);

}

}

}

}

}

main();

REST

curl -s -X POST \

"https://generativelanguage.googleapis.com/v1beta/interactions" \

-H "x-goog-api-key: $GEMINI_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"model": "gemini-3.1-flash-image-preview",

"input": [{"parts": [{"text": "Da Vinci style anatomical sketch of a dissected Monarch butterfly. Detailed drawings of the head, wings, and legs on textured parchment with notes in English."}]}],

"response_format": [

{

"type": "image",

"mime_type": "image/png",

"aspect_ratio": "1:1",

"image_size": "1K"

}

]

}'

The following is an example image generated from this prompt:

Thinking Process

Gemini 3 image models are thinking models that use a reasoning process ("Thinking") for complex prompts. This feature is enabled by default and cannot be disabled in the API. To learn more about the thinking process, see the Gemini Thinking guide.

The model generates up to two interim images to test composition and logic. The last image within Thinking is also the final rendered image.

You can inspect the thoughts that led to the final image.
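Sometimes you want to keep the thought summaries and interim images instead of displaying them one by one. The sketch below gathers them into two lists. It assumes thought steps expose a .summary list of text and image blocks, as in the snippets in this section. collect_thoughts is a hypothetical helper. Since the model generates up to two interim images, the image list will usually be short, but the helper does not enforce a count.

```python
import base64


def collect_thoughts(interaction):
    """Return (texts, images) from thought steps; images are decoded bytes."""
    texts, images = [], []
    for step in interaction.steps:
        if step.type != "thought":
            continue  # final output lives in model_output steps
        for block in step.summary:
            if block.type == "text":
                texts.append(block.text)
            elif block.type == "image":
                images.append(base64.b64decode(block.data))
    return texts, images
```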

Python

```python
import base64
import io

from PIL import Image

# Continues from an earlier create() call that produced `interaction`.
for step in interaction.steps:
    if step.type == "thought":
        for content_block in step.summary:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                image = Image.open(io.BytesIO(base64.b64decode(content_block.data)))
                image.show()
```

JavaScript

```javascript
for (const step of interaction.steps) {
  if (step.type === "thought") {
    for (const contentBlock of step.summary) {
      if (contentBlock.type === "text") {
        console.log(contentBlock.text);
      } else if (contentBlock.type === "image") {
        const buffer = Buffer.from(contentBlock.data, "base64");
        fs.writeFileSync("thought_image.png", buffer);
      }
    }
  }
}
```

Controlling thinking levels

With Gemini 3.1 Flash Image, you can control the amount of thinking the model uses to balance quality and latency. The default thinkingLevel is minimal, and the supported levels are minimal and high.

You can add the includeThoughts boolean to determine whether the model's generated thoughts are returned in the response or remain hidden.

Python

```python
from google import genai
from PIL import Image
import base64
import io

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="A futuristic city built inside a giant glass bottle floating in space",
    generation_config={"thinking_level": "High"},
)

for step in interaction.steps:
    if step.type == "thought":
        continue  # skip interim thought steps
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                image = Image.open(io.BytesIO(base64.b64decode(content_block.data)))
                image.show()
```

JavaScript

```javascript
import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "A futuristic city built inside a giant glass bottle floating in space",
    generationConfig: { thinkingLevel: "High" },
  });

  for (const step of interaction.steps) {
    if (step.type === "thought") continue;
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("image.png", buffer);
        }
      }
    }
  }
}

main();
```

REST

```bash
curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [
      {"type": "text", "text": "A futuristic city built inside a giant glass bottle floating in space"}
    ],
    "generation_config": {
      "thinking_level": "High"
    }
  }'
```

Thinking tokens are billed regardless of whether includeThoughts is set to true or false, because the thinking process always runs, whether or not you view it.

Other image generation modes

Although Nano Banana image generation models are recommended for most use cases, you can also explore dedicated media generation models:

- Imagen: Google's text-to-image models optimized for generating high-quality images.

- Veo: Google's video generation model.

Generate images in batch

All of the image generation capabilities described on this page can also be run as batch jobs using the Batch API.

Prompting guide and strategies

This section provides prompt examples and templates for common image generation and editing workflows. Each example includes a reusable template and a sample prompt for the Interactions API.

Prompts for generating images

The following examples show how to use text prompts to generate various types of images.

1. Photorealistic scenes

Describe a scene in rich detail. The more specific you are, the more control you have over the results.

Template

A photorealistic [type of shot] of a [subject description] in a [setting description]. [Description of the light]. Shot from a [camera angle] with a [lens type].

Prompt

A photorealistic wide-angle shot of a vibrant coral reef teeming with tropical fish. Crystal-clear turquoise water with sunbeams filtering down from the surface, illuminating a sea turtle gliding gracefully over the coral. Shot from a low perspective with a wide-angle lens. Aspect ratio 16:9.
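To reuse the template across many prompts, you can fill each bracketed slot from a named field. The sketch below is one way to do that with a dataclass. The class and field names are illustrative, and render() simply reproduces the template sentence order shown above.

```python
from dataclasses import dataclass


@dataclass
class PhotorealisticPrompt:
    """Slots of the photorealistic-scene template (field names are illustrative)."""
    shot: str
    subject: str
    setting: str
    lighting: str
    camera_angle: str
    lens: str

    def render(self) -> str:
        # Drop a trailing period from the lighting text so it isn't doubled.
        lighting = self.lighting.rstrip(".")
        return (
            f"A photorealistic {self.shot} of a {self.subject} in a {self.setting}. "
            f"{lighting}. Shot from a {self.camera_angle} with a {self.lens}."
        )
```

Pass the rendered string as input= to client.interactions.create, as in the examples on this page. Keeping the slots as named fields makes it easy to vary one attribute, such as the lens, while holding the rest of the scene fixed.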

Python

```python
from google import genai
import base64

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="A photorealistic wide-angle shot of a vibrant coral reef teeming with tropical fish. Crystal-clear turquoise water with sunbeams filtering down from the surface, illuminating a sea turtle gliding gracefully over the coral. Shot from a low perspective with a wide-angle lens. Aspect ratio 16:9.",
    response_format=[
        {
            "type": "image",
            "mime_type": "image/png",
            "aspect_ratio": "16:9",
        }
    ],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("coral_reef.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))
```

JavaScript

```javascript
import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "A photorealistic wide-angle shot of a vibrant coral reef teeming with tropical fish. Crystal-clear turquoise water with sunbeams filtering down from the surface, illuminating a sea turtle gliding gracefully over the coral. Shot from a low perspective with a wide-angle lens. Aspect ratio 16:9.",
    response_format: [
      {
        type: "image",
        mime_type: "image/png",
        aspect_ratio: "16:9",
      },
    ],
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("coral_reef.png", buffer);
        }
      }
    }
  }
}

main();
```

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [{"parts": [{"text": "A photorealistic wide-angle shot of a vibrant coral reef teeming with tropical fish. Crystal-clear turquoise water with sunbeams filtering down from the surface, illuminating a sea turtle gliding gracefully over the coral. Shot from a low perspective with a wide-angle lens. Aspect ratio 16:9."}]}],
    "response_format": {
      "type": "image",
      "mime_type": "image/png",
      "aspect_ratio": "16:9"
    }
  }'
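Every example on this page repeats the same loop over `interaction.steps`, and each one writes every image to a single fixed filename, so if a response contains more than one image block, earlier images are overwritten. Below is a minimal sketch of a reusable helper that numbers the files instead. It assumes the response shape used in these examples (`steps`, `type`, `content`, and base64 `data`); the `save_images` name and the `.png` extension are assumptions, and `SimpleNamespace` objects stand in for a real response so the sketch runs offline.

```python
import base64
import tempfile
from pathlib import Path
from types import SimpleNamespace


def save_images(interaction, stem: str, out_dir: str = ".") -> list[Path]:
    """Print text blocks and write each image block to <stem>_<n>.png in out_dir."""
    saved: list[Path] = []
    for step in interaction.steps:
        if step.type != "model_output":
            continue
        for block in step.content:
            if block.type == "text":
                print(block.text)
            elif block.type == "image":
                # Number the files so a second image does not overwrite the first.
                path = Path(out_dir) / f"{stem}_{len(saved)}.png"
                path.write_bytes(base64.b64decode(block.data))
                saved.append(path)
    return saved


# Offline stand-in for a response with one text block and two image blocks.
fake_interaction = SimpleNamespace(steps=[
    SimpleNamespace(type="model_output", content=[
        SimpleNamespace(type="text", text="Here are two variations."),
        SimpleNamespace(type="image", data=base64.b64encode(b"first").decode()),
        SimpleNamespace(type="image", data=base64.b64encode(b"second").decode()),
    ]),
])

paths = save_images(fake_interaction, "coral_reef", tempfile.mkdtemp())
print([p.name for p in paths])
```

With a real response, the call would be `save_images(interaction, "coral_reef")`.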

2. Stylized illustrations & stickers

Describe the artistic style, subject, and medium. Be specific about the visual detail (bold lines, colors, etc.) for consistent results.

Template

A [style] of a [subject, with details about accessories or actions] doing [activity]. The design features [visual qualities, e.g., bold outlines, cel-shading, etc.] and [color/background preference].

Prompt

A kawaii-style sticker of a happy red panda wearing a tiny bamboo hat. It's munching on a green bamboo leaf. The design features bold, clean outlines, simple cel-shading, and a vibrant color palette. The background must be white.

Python

from google import genai
import base64

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="A kawaii-style sticker of a happy red panda wearing a tiny bamboo hat. It's munching on a green bamboo leaf. The design features bold, clean outlines, simple cel-shading, and a vibrant color palette. The background must be white.",
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("red_panda_sticker.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "A kawaii-style sticker of a happy red panda wearing a tiny bamboo hat. It's munching on a green bamboo leaf. The design features bold, clean outlines, simple cel-shading, and a vibrant color palette. The background must be white.",
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("red_panda_sticker.png", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [{"parts": [{"text": "A kawaii-style sticker of a happy red panda wearing a tiny bamboo hat. It is munching on a green bamboo leaf. The design features bold, clean outlines, simple cel-shading, and a vibrant color palette. The background must be white."}]}]
  }'
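Some REST examples reword the prompt ("It is munching", "called The Daily Grind") because a `'` would end the single-quoted `-d` string in the shell. One way to keep the prompt verbatim is to build the request body with Python's `json` module and let curl read it from a file. This sketch mirrors the body shape of the REST example above; the `request.json` filename is arbitrary.

```python
import json
from pathlib import Path

# The prompt keeps its apostrophe; json.dumps applies whatever escaping JSON needs.
prompt = (
    "A kawaii-style sticker of a happy red panda wearing a tiny bamboo hat. "
    "It's munching on a green bamboo leaf. The design features bold, clean "
    "outlines, simple cel-shading, and a vibrant color palette. "
    "The background must be white."
)

# Same body shape as the REST example above.
body = {
    "model": "gemini-3.1-flash-image-preview",
    "input": [{"parts": [{"text": prompt}]}],
}

Path("request.json").write_text(json.dumps(body, indent=2), encoding="utf-8")
```

The file can then be sent with `curl -s -X POST "https://generativelanguage.googleapis.com/v1beta/interactions" -H "x-goog-api-key: $GEMINI_API_KEY" -H "Content-Type: application/json" --data-binary @request.json`, so no shell quoting of the prompt is needed.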

3. Accurate text in images

Gemini excels at rendering text. Be clear about the text, the font style (descriptively), and the overall design. Use Gemini 3 Pro Image Preview for professional asset production.

Template

Create a [image type] for [brand/concept] with the text "[text to render]" in a [font style]. The design should be [style description], with a [color scheme].

Prompt

Create a modern, minimalist logo for a coffee shop called 'The Daily Grind'. The text should be in a clean, bold, sans-serif font. The color scheme is black and white. Put the logo in a circle. Use a coffee bean in a clever way.

Python

from google import genai
import base64

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="Create a modern, minimalist logo for a coffee shop called 'The Daily Grind'. The text should be in a clean, bold, sans-serif font. The color scheme is black and white. Put the logo in a circle. Use a coffee bean in a clever way.",
    response_format={"type": "image", "aspect_ratio": "1:1"},
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("logo_example.jpg", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "Create a modern, minimalist logo for a coffee shop called 'The Daily Grind'. The text should be in a clean, bold, sans-serif font. The color scheme is black and white. Put the logo in a circle. Use a coffee bean in a clever way.",
    responseFormat: { type: "image", aspectRatio: "1:1" },
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("logo_example.jpg", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [{"parts": [{"text": "Create a modern, minimalist logo for a coffee shop called The Daily Grind. The text should be in a clean, bold, sans-serif font. The color scheme is black and white. Put the logo in a circle. Use a coffee bean in a clever way."}]}],
    "response_format": {
      "type": "image",
      "aspect_ratio": "1:1"
    }
  }'
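The logo example saves its result as `logo_example.jpg`, but that request does not set `mime_type`, so the returned bytes are not guaranteed to be JPEG. A small sketch that picks the extension from the file's leading "magic" bytes (the standard PNG, JPEG and WebP signatures) avoids a mismatched extension. The `image_extension` helper is hypothetical and recognizes only those three formats.

```python
# Leading "magic" bytes that identify common image formats.
PNG_MAGIC = b"\x89PNG\r\n\x1a\n"
JPEG_MAGIC = b"\xff\xd8\xff"


def image_extension(data: bytes) -> str:
    """Return a file extension that matches the decoded image bytes."""
    if data.startswith(PNG_MAGIC):
        return ".png"
    if data.startswith(JPEG_MAGIC):
        return ".jpg"
    # WebP is a RIFF container: "RIFF", a 4-byte size, then "WEBP".
    if data[:4] == b"RIFF" and data[8:12] == b"WEBP":
        return ".webp"
    raise ValueError("unrecognized image format")


sample = PNG_MAGIC + b"\x00" * 16  # stand-in for base64.b64decode(content_block.data)
print("logo_example" + image_extension(sample))  # logo_example.png
```

In the loop above, the decoded bytes would be passed to `image_extension` before opening the output file.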

4. Product mockups & commercial photography

Perfect for creating clean, professional product shots for ecommerce, advertising, or branding.

Template

A high-resolution, studio-lit product photograph of a [product description] on a [background surface/description]. The lighting is a [lighting setup, e.g., three-point softbox setup] to [lighting purpose]. The camera angle is a [angle type] to showcase [specific feature]. Ultra-realistic, with sharp focus on [key detail]. [Aspect ratio].

Prompt

A high-resolution, studio-lit product photograph of a minimalist ceramic coffee mug in matte black, presented on a polished concrete surface. The lighting is a three-point softbox setup designed to create soft, diffused highlights and eliminate harsh shadows. The camera angle is a slightly elevated 45-degree shot to showcase its clean lines. Ultra-realistic, with sharp focus on the steam rising from the coffee. Square image.

Python

from google import genai
import base64

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="A high-resolution, studio-lit product photograph of a minimalist ceramic coffee mug in matte black, presented on a polished concrete surface. The lighting is a three-point softbox setup designed to create soft, diffused highlights and eliminate harsh shadows. The camera angle is a slightly elevated 45-degree shot to showcase its clean lines. Ultra-realistic, with sharp focus on the steam rising from the coffee. Square image.",
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("product_mockup.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "A high-resolution, studio-lit product photograph of a minimalist ceramic coffee mug in matte black, presented on a polished concrete surface. The lighting is a three-point softbox setup designed to create soft, diffused highlights and eliminate harsh shadows. The camera angle is a slightly elevated 45-degree shot to showcase its clean lines. Ultra-realistic, with sharp focus on the steam rising from the coffee. Square image.",
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("product_mockup.png", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [{"parts": [{"text": "A high-resolution, studio-lit product photograph of a minimalist ceramic coffee mug in matte black, presented on a polished concrete surface. The lighting is a three-point softbox setup designed to create soft, diffused highlights and eliminate harsh shadows. The camera angle is a slightly elevated 45-degree shot to showcase its clean lines. Ultra-realistic, with sharp focus on the steam rising from the coffee. Square image."}]}]
  }'

5. Minimalist & negative space design

Excellent for creating backgrounds for websites, presentations, or marketing materials where text will be overlaid.

Template

A minimalist composition featuring a single [subject] positioned in the [bottom-right/top-left/etc.] of the frame. The background is a vast, empty [color] canvas, creating significant negative space. Soft, subtle lighting. [Aspect ratio].

Prompt

A minimalist composition featuring a single, delicate red maple leaf positioned in the bottom-right of the frame. The background is a vast, empty off-white canvas, creating significant negative space for text. Soft, diffused lighting from the top left. Square image.

Python

from google import genai
import base64

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="A minimalist composition featuring a single, delicate red maple leaf positioned in the bottom-right of the frame. The background is a vast, empty off-white canvas, creating significant negative space for text. Soft, diffused lighting from the top left. Square image.",
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("minimalist_design.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "A minimalist composition featuring a single, delicate red maple leaf positioned in the bottom-right of the frame. The background is a vast, empty off-white canvas, creating significant negative space for text. Soft, diffused lighting from the top left. Square image.",
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("minimalist_design.png", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [{"parts": [{"text": "A minimalist composition featuring a single, delicate red maple leaf positioned in the bottom-right of the frame. The background is a vast, empty off-white canvas, creating significant negative space for text. Soft, diffused lighting from the top left. Square image."}]}]
  }'

6. Sequential art (Comic panel / Storyboard)

Builds on character consistency and scene description to create panels for visual storytelling. For accurate text and stronger storytelling, these prompts work best with Gemini 3 Pro Image Preview and Gemini 3.1 Flash Image Preview.

Template

Make a 3 panel comic in a [style]. Put the character in a [type of scene].

Prompt

Make a 3 panel comic in a gritty, noir art style with high-contrast black and white inks. Put the character in a humorous scene.

Python

from google import genai
from PIL import Image
import base64

client = genai.Client()

image_input = Image.open('/path/to/your/man_in_white_glasses.jpg')
text_input = "Make a 3 panel comic in a gritty, noir art style with high-contrast black and white inks. Put the character in a humorous scene."

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[text_input, image_input],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("comic_panel.jpg", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const imagePath = "/path/to/your/man_in_white_glasses.jpg";
  const imageData = fs.readFileSync(imagePath);
  const base64Image = imageData.toString("base64");

  const input = [
    { text: "Make a 3 panel comic in a gritty, noir art style with high-contrast black and white inks. Put the character in a humorous scene." },
    { inlineData: { mimeType: "image/jpeg", data: base64Image } },
  ];

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: input,
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("comic_panel.jpg", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [{"parts": [
      {"text": "Make a 3 panel comic in a gritty, noir art style with high-contrast black and white inks. Put the character in a humorous scene."},
      {"inline_data": {"mime_type": "image/jpeg", "data": "<BASE64_IMAGE_DATA>"}}
    ]}]
  }'


7. Grounding with Google Search

Use Google Search to generate images based on recent or real-time information. This is useful for news, weather, and other time-sensitive topics.

Prompt

Make a simple but stylish graphic of last night's Arsenal game in the Champions League

Python

from google import genai
import base64

client = genai.Client()

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input="Make a simple but stylish graphic of last night's Arsenal game in the Champions League",
    tools=[{"google_search": {}}],
    response_format={"type": "image", "aspect_ratio": "16:9"},
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("football-score.jpg", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: "Make a simple but stylish graphic of last night's Arsenal game in the Champions League",
    tools: [{ googleSearch: {} }],
    responseFormat: { type: "image", aspectRatio: "16:9", imageSize: "2K" },
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("football-score.jpg", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-3.1-flash-image-preview",
    "input": [{"parts": [{"text": "Make a simple but stylish graphic of last nights Arsenal game in the Champions League"}]}],
    "tools": [{"google_search": {}}],
    "response_format": {
      "type": "image",
      "aspect_ratio": "16:9"
    }
  }'

Prompts for editing images

These examples show how to provide images alongside your text prompts for editing, composition, and style transfer.

1. Adding and removing elements

Provide an image and describe your change. The model attempts to match the original image's style, lighting, and perspective.

Template

Using the provided image of [subject], please [add/remove/modify] [element] to/from the scene. Ensure the change is [description of how the change should integrate].

Prompt

"Using the provided image of my cat, please add a small, knitted wizard hat

on its head. Make it look like it's sitting comfortably and matches the soft

lighting of the photo."

Python

from google import genai
from PIL import Image
import base64

client = genai.Client()

image_input = Image.open('/path/to/your/cat_photo.png')
text_input = """Using the provided image of my cat, please add a small, knitted wizard hat on its head. Make it look like it's sitting comfortably and not falling off."""

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[text_input, image_input],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("cat_with_hat.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const imagePath = "/path/to/your/cat_photo.png";
  const imageData = fs.readFileSync(imagePath);
  const base64Image = imageData.toString("base64");

  const input = [
    { text: "Using the provided image of my cat, please add a small, knitted wizard hat on its head. Make it look like it's sitting comfortably and not falling off." },
    { inlineData: { mimeType: "image/png", data: base64Image } },
  ];

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: input,
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("cat_with_hat.png", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -d "{
    \"model\": \"gemini-3.1-flash-image-preview\",
    \"input\": [{
      \"parts\":[
        {\"text\": \"Using the provided image of my cat, please add a small, knitted wizard hat on its head. Make it look like it's sitting comfortably and not falling off.\"},
        {\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_IMAGE_DATA>\"}}
      ]
    }]
  }"


2. Inpainting (Semantic masking)

Conversationally define a "mask" to edit a specific part of an image while leaving the rest untouched.

Template

Using the provided image, change only the [specific element] to [new element/description]. Keep everything else in the image exactly the same, preserving the original style, lighting, and composition.

Prompt

"Using the provided image of a living room, change only the blue sofa to be

a vintage, brown leather chesterfield sofa. Keep the rest of the room,

including the pillows on the sofa and the lighting, unchanged."

Python

from google import genai
from PIL import Image
import base64

client = genai.Client()

living_room_image = Image.open('/path/to/your/living_room.png')
text_input = """Using the provided image of a living room, change only the blue sofa to be a vintage, brown leather chesterfield sofa. Keep the rest of the room, including the pillows on the sofa and the lighting, unchanged."""

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[living_room_image, text_input],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("living_room_edited.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const imagePath = "/path/to/your/living_room.png";
  const imageData = fs.readFileSync(imagePath);
  const base64Image = imageData.toString("base64");

  const input = [
    { inlineData: { mimeType: "image/png", data: base64Image } },
    { text: "Using the provided image of a living room, change only the blue sofa to be a vintage, brown leather chesterfield sofa. Keep the rest of the room, including the pillows on the sofa and the lighting, unchanged." },
  ];

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: input,
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("living_room_edited.png", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -d "{
    \"model\": \"gemini-3.1-flash-image-preview\",
    \"input\": [{
      \"parts\":[
        {\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_IMAGE_DATA>\"}},
        {\"text\": \"Using the provided image of a living room, change only the blue sofa to be a vintage, brown leather chesterfield sofa. Keep the rest of the room, including the pillows on the sofa and the lighting, unchanged.\"}
      ]
    }]
  }"


3. Style transfer

Provide an image and ask the model to recreate its content in a different artistic style.

Template

Transform the provided photograph of [subject] into the artistic style of [artist/art style]. Preserve the original composition but render it with [description of stylistic elements].

Prompt

"Transform the provided photograph of a modern city street at night into the artistic style of Vincent van Gogh's 'Starry Night'. Preserve the original composition of buildings and cars, but render all elements with swirling, impasto brushstrokes and a dramatic palette of deep blues and bright yellows."

Python

from google import genai
from PIL import Image
import base64

client = genai.Client()

city_image = Image.open('/path/to/your/city.png')
text_input = """Transform the provided photograph of a modern city street at night into the artistic style of Vincent van Gogh's 'Starry Night'. Preserve the original composition of buildings and cars, but render all elements with swirling, impasto brushstrokes and a dramatic palette of deep blues and bright yellows."""

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[city_image, text_input],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("city_style_transfer.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const imageData = fs.readFileSync("/path/to/your/city.png");
  const base64Image = imageData.toString("base64");

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: [
      { inlineData: { mimeType: "image/png", data: base64Image } },
      { text: "Transform the provided photograph of a modern city street at night into the artistic style of Vincent van Gogh's 'Starry Night'. Preserve the original composition of buildings and cars, but render all elements with swirling, impasto brushstrokes and a dramatic palette of deep blues and bright yellows." },
    ],
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("city_style_transfer.png", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -d "{
    \"model\": \"gemini-3.1-flash-image-preview\",
    \"input\": [{
      \"parts\":[
        {\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_IMAGE_DATA>\"}},
        {\"text\": \"Transform the provided photograph of a modern city street at night into the artistic style of Vincent van Gogh's 'Starry Night'. Preserve the original composition of buildings and cars, but render all elements with swirling, impasto brushstrokes and a dramatic palette of deep blues and bright yellows.\"}
      ]
    }]
  }"


4. Advanced composition: Combining multiple images

Provide multiple images as context to create a new, composite scene. This is perfect for product mockups or creative collages.

Template

Create a new image by combining the elements from the provided images. Take the [element from image 1] and place it with/on the [element from image 2]. The final image should be a [description of the final scene].

Prompt

"Create a professional e-commerce fashion photo. Take the blue floral dress

from the first image and let the woman from the second image wear it.

Generate a realistic, full-body shot of the woman wearing the dress, with

the lighting and shadows adjusted to match the outdoor environment."

Python

from google import genai
from PIL import Image
import base64

client = genai.Client()

dress_image = Image.open('/path/to/your/dress.png')
model_image = Image.open('/path/to/your/model.png')
text_input = """Create a professional e-commerce fashion photo. Take the blue floral dress from the first image and let the woman from the second image wear it. Generate a realistic, full-body shot of the woman wearing the dress, with the lighting and shadows adjusted to match the outdoor environment."""

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[dress_image, model_image, text_input],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("fashion_ecommerce_shot.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const imagePath1 = "/path/to/your/dress.png";
  const imageData1 = fs.readFileSync(imagePath1);
  const base64Image1 = imageData1.toString("base64");

  const imagePath2 = "/path/to/your/model.png";
  const imageData2 = fs.readFileSync(imagePath2);
  const base64Image2 = imageData2.toString("base64");

  const input = [
    { inlineData: { mimeType: "image/png", data: base64Image1 } },
    { inlineData: { mimeType: "image/png", data: base64Image2 } },
    { text: "Create a professional e-commerce fashion photo. Take the blue floral dress from the first image and let the woman from the second image wear it. Generate a realistic, full-body shot of the woman wearing the dress, with the lighting and shadows adjusted to match the outdoor environment." },
  ];

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: input,
  });

  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("fashion_ecommerce_shot.png", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -d "{
    \"model\": \"gemini-3.1-flash-image-preview\",
    \"input\": [{
      \"parts\":[
        {\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_IMAGE_DATA_1>\"}},
        {\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_IMAGE_DATA_2>\"}},
        {\"text\": \"Create a professional e-commerce fashion photo. Take the blue floral dress from the first image and let the woman from the second image wear it. Generate a realistic, full-body shot of the woman wearing the dress, with the lighting and shadows adjusted to match the outdoor environment.\"}
      ]
    }]
  }"


5. High-fidelity detail preservation

To ensure critical details (like a face or logo) are preserved during an edit, describe them in great detail along with your edit request.

Template

Using the provided images, place [element from image 2] onto [element from image 1]. Ensure that the features of [element from image 1] remain completely unchanged. The added element should [description of how the element should integrate].

Prompt

"Take the first image of the woman with brown hair, blue eyes, and a neutral

expression. Add the logo from the second image onto her black t-shirt.

Ensure the woman's face and features remain completely unchanged. The logo

should look like it's naturally printed on the fabric, following the folds

of the shirt."

Python

from google import genai
from PIL import Image
import base64

client = genai.Client()

woman_image = Image.open('/path/to/your/woman.png')
logo_image = Image.open('/path/to/your/logo.png')
text_input = """Take the first image of the woman with brown hair, blue eyes, and a neutral expression. Add the logo from the second image onto her black t-shirt. Ensure the woman's face and features remain completely unchanged. The logo should look like it's naturally printed on the fabric, following the folds of the shirt."""

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[woman_image, logo_image, text_input],
)

for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("woman_with_logo.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const imagePath1 = "/path/to/your/woman.png";
  const imageData1 = fs.readFileSync(imagePath1);
  const base64Image1 = imageData1.toString("base64");

  const imagePath2 = "/path/to/your/logo.png";
  const imageData2 = fs.readFileSync(imagePath2);
  const base64Image2 = imageData2.toString("base64");

  const input = [
    { inlineData: { mimeType: "image/png", data: base64Image1 } },
    { inlineData: { mimeType: "image/png", data: base64Image2 } },
    { text: "Take the first image of the woman with brown hair, blue eyes, and a neutral expression. Add the logo from the second image onto her black t-shirt. Ensure the woman's face and features remain completely unchanged. The logo should look like it's naturally printed on the fabric, following the folds of the shirt." },
  ];

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: input,
  });

  // Print any text and save any image returned by the model.
  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("woman_with_logo.png", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -d "{
    \"model\": \"gemini-3.1-flash-image-preview\",
    \"input\": [{
      \"parts\":[
        {\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_IMAGE_DATA_1>\"}},
        {\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_IMAGE_DATA_2>\"}},
        {\"text\": \"Take the first image of the woman with brown hair, blue eyes, and a neutral expression. Add the logo from the second image onto her black t-shirt. Ensure the woman's face and features remain completely unchanged. The logo should look like it's naturally printed on the fabric, following the folds of the shirt.\"}
      ]
    }]
  }"

| Input 1 | Input 2 | Output |
| --- | --- | --- |
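The Python samples above write every returned image to one fixed filename. If a response contains more than one image block, each write overwrites the previous one. The following is a minimal sketch of a helper that keeps all of them by numbering the files. It assumes the `step.type`, `step.content`, `block.type` and `block.data` shape used in the samples. The helper names are hypothetical, and it always uses a `.png` extension, as the samples do.

```python
import base64
from typing import Any, Iterable, List


def collect_images(steps: Iterable[Any]) -> List[bytes]:
    """Decode every image block found in model_output steps, in order."""
    images: List[bytes] = []
    for step in steps:
        if step.type != "model_output":
            continue  # skip any step that is not model output
        for block in step.content:
            if block.type == "image":
                images.append(base64.b64decode(block.data))
    return images


def save_images(steps: Iterable[Any], stem: str) -> List[str]:
    """Write each decoded image to '<stem>_<index>.png' and return the file names."""
    names: List[str] = []
    for index, data in enumerate(collect_images(steps)):
        name = f"{stem}_{index}.png"
        with open(name, "wb") as f:
            f.write(data)
        names.append(name)
    return names
```

For example, `save_images(interaction.steps, "woman_with_logo")` would write `woman_with_logo_0.png`, `woman_with_logo_1.png`, and so on.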

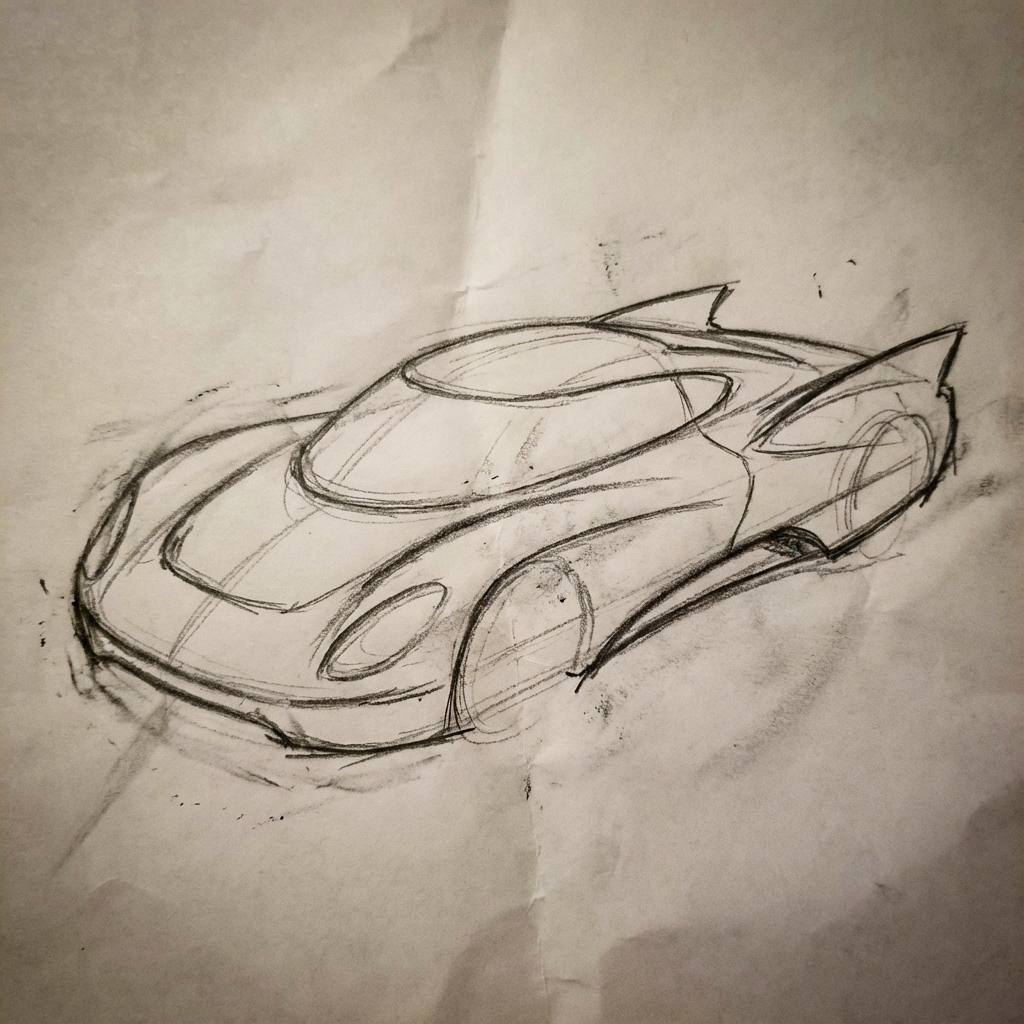

6. Bring something to life

Upload a rough sketch or drawing and ask the model to refine it into a finished image.

Template

Turn this rough [medium] sketch of a [subject] into a [style description] photo. Keep the [specific features] from the sketch but add [new details/materials].
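The following is a minimal sketch of filling this template programmatically, with one argument per bracketed slot. The function name is hypothetical. Rejecting blank slots is an added assumption, meant to catch a prompt that would otherwise be sent with a gap in it.

```python
def sketch_to_photo_prompt(medium: str, subject: str, style: str, keep: str, add: str) -> str:
    """Fill the sketch-refinement template; each argument replaces one bracketed slot."""
    slots = {"medium": medium, "subject": subject, "style": style, "keep": keep, "add": add}
    blank = [name for name, value in slots.items() if not value.strip()]
    if blank:
        raise ValueError(f"empty template slot(s): {', '.join(blank)}")
    return (
        f"Turn this rough {medium} sketch of a {subject} into a {style} photo. "
        f"Keep the {keep} from the sketch but add {add}."
    )
```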

Prompt

"Turn this rough pencil sketch of a futuristic car into a polished photo of the finished concept car in a showroom. Keep the sleek lines and low profile from the sketch but add metallic blue paint and neon rim lighting."

Python

import base64

from google import genai
from PIL import Image

client = genai.Client()

sketch_image = Image.open('/path/to/your/car_sketch.png')
text_input = """Turn this rough pencil sketch of a futuristic car into a polished photo of the finished concept car in a showroom. Keep the sleek lines and low profile from the sketch but add metallic blue paint and neon rim lighting."""

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[sketch_image, text_input],
)

# Print any text and save any image returned by the model.
for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("car_photo.png", "wb") as f:
                    f.write(base64.b64decode(content_block.data))

JavaScript

import { GoogleGenAI } from "@google/genai";
import * as fs from "node:fs";

async function main() {
  const ai = new GoogleGenAI({});

  const imagePath = "/path/to/your/car_sketch.png";
  const imageData = fs.readFileSync(imagePath);
  const base64Image = imageData.toString("base64");

  const input = [
    { inlineData: { mimeType: "image/png", data: base64Image } },
    { text: "Turn this rough pencil sketch of a futuristic car into a polished photo of the finished concept car in a showroom. Keep the sleek lines and low profile from the sketch but add metallic blue paint and neon rim lighting." },
  ];

  const interaction = await ai.interactions.create({
    model: "gemini-3.1-flash-image-preview",
    input: input,
  });

  // Print any text and save any image returned by the model.
  for (const step of interaction.steps) {
    if (step.type === "model_output") {
      for (const contentBlock of step.content) {
        if (contentBlock.type === "text") {
          console.log(contentBlock.text);
        } else if (contentBlock.type === "image") {
          const buffer = Buffer.from(contentBlock.data, "base64");
          fs.writeFileSync("car_photo.png", buffer);
        }
      }
    }
  }
}

main();

REST

curl -s -X POST \
  "https://generativelanguage.googleapis.com/v1beta/interactions" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -d "{
    \"model\": \"gemini-3.1-flash-image-preview\",
    \"input\": [{
      \"parts\":[
        {\"inline_data\": {\"mime_type\":\"image/png\", \"data\": \"<BASE64_IMAGE_DATA>\"}},
        {\"text\": \"Turn this rough pencil sketch of a futuristic car into a polished photo of the finished concept car in a showroom. Keep the sleek lines and low profile from the sketch but add metallic blue paint and neon rim lighting.\"}
      ]
    }]
  }"

| Input | Output |
| --- | --- |

7. Character consistency: 360 view

You can generate 360-degree views of a character by iteratively prompting for different angles. For best results, include previously generated images in subsequent prompts to maintain consistency. For complex poses, include a reference image of the selected pose.

Template

A studio portrait of [person] against [background], [looking forward/in profile looking right/etc.]
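The iterative approach described above can be sketched as a loop. Each request contains the filled template, the original reference image, every view generated so far, and optionally a pose reference for that angle. This is a minimal sketch under stated assumptions. All function names are hypothetical. `generate_view` is a callable you would supply, for example one that wraps `client.interactions.create(...)` and returns the decoded output image, so the loop itself makes no API calls.

```python
from typing import Any, Callable, Dict, List, Optional


def view_prompt(person: str, background: str, angle: str) -> str:
    """Fill the studio-portrait template for one viewing angle."""
    return f"A studio portrait of {person} against {background}, {angle}"


def build_view_inputs(
    reference: Any,
    previous_views: List[Any],
    person: str,
    background: str,
    angle: str,
    pose_reference: Optional[Any] = None,
) -> List[Any]:
    """One request's input: prompt, original reference, earlier views, optional pose image."""
    inputs: List[Any] = [view_prompt(person, background, angle), reference, *previous_views]
    if pose_reference is not None:
        inputs.append(pose_reference)
    return inputs


def generate_turnaround(
    reference: Any,
    person: str,
    background: str,
    angles: List[str],
    generate_view: Callable[[List[Any]], Any],  # hypothetical wrapper around the API call
    poses: Optional[Dict[str, Any]] = None,  # optional angle -> pose reference image
) -> List[Any]:
    """Request each angle in turn, feeding all previously generated views back in."""
    views: List[Any] = []
    for angle in angles:
        pose = poses.get(angle) if poses else None
        inputs = build_view_inputs(reference, views, person, background, angle, pose)
        views.append(generate_view(inputs))
    return views
```

For example, `angles` could be `["looking forward", "in profile looking right"]`, taken from the template's own examples, followed by whatever other angles the turnaround needs. Passing the growing `views` list means later requests see more context, at the cost of larger requests.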

Prompt

"A studio portrait of this man against white, in profile looking right"

Python

import base64

from google import genai
from PIL import Image

client = genai.Client()

image_input = Image.open('/path/to/your/man_in_white_glasses.jpg')
text_input = """A studio portrait of this man against white, in profile looking right"""

interaction = client.interactions.create(
    model="gemini-3.1-flash-image-preview",
    input=[text_input, image_input],
)

# Print any text and save any image returned by the model.
for step in interaction.steps:
    if step.type == "model_output":
        for content_block in step.content:
            if content_block.type == "text":
                print(content_block.text)
            elif content_block.type == "image":
                with open("man_right_profile.png", "wb") as f: